How Hydrogen Learned to Worry (A 13.8 Billion Year Recap)

Thirteen point eight billion years ago, the universe exploded. This was the last time anything interesting happened for about 400 million years. Then hydrogen atoms, which had been drifting around doing nothing (hydrogen is the simplest element because the universe started with low expectations), began clumping together until they got so dense they caught fire. These fires are called “stars.” Stars are the universe’s first manufacturing process: they take hydrogen, crush it into heavier elements like carbon and oxygen, and then explode, scattering their products across space. The workplace fatality rate is 100%.

This went on for about 9 billion years. Then, on one unremarkable rock (the one you named “dirt”) orbiting one unremarkable star in one unremarkable galaxy, carbon atoms started doing something unusual. Carbon is the most promiscuous element in the periodic table; it will bond with almost anything. On your particular rock, it bonded with hydrogen, oxygen, and nitrogen in arrangements that could do one very specific trick: copy themselves.

This was the moment everything went wrong.

The molecules that were better at copying made more copies. The ones that were worse at copying made fewer. This is the entire plot of the next 4 billion years. It is also the entire plot of your economy, your politics, your wars, and your inability to fund clinical trials. Everything else is a footnote. Including you.

Four billion years of copying molecules competing with other copying molecules eventually produced a copying molecule so complicated it could look up at the stars that made it and ask, “Why am I anxious about a meeting tomorrow?” The answer is: because anxiety made your ancestors copy more effectively than calm did. You are a temporary vehicle that copying molecules built to make more copying molecules, and the vehicle has become sentient enough to realize this. Congratulations. You’re a meat taxi for chemistry.

But the vehicle wasn’t built all at once. It was built in layers, like a bad renovation where nobody removed the previous tenants’ plumbing. First came the reptilian brain: the oldest layer, roughly 500 million years old, handling the functions so basic they’re barely worth listing (breathe, eat, fight, flee, reproduce, regulate body temperature). This is the brain you share with lizards. It has no feelings. It has no opinions. It has reflexes, and those reflexes kept vertebrates alive for 300 million years before anything with fur showed up. It’s still running. It’s running right now. It’s the reason your heart is beating without your permission.

Then, about 200 million years ago, mammals evolved the limbic system on top of the reptile brain, like building a nursery on top of a weapons depot. This is your emotional brain. It’s the reason you bond with your children instead of eating them (a genuine upgrade over the reptilian model). It handles fear, pleasure, memory, and social bonding. It’s why you’ll sob at a movie about a fictional dog but scroll past an article about 10,000 real people dying. It processes information faster than the part of your brain that thinks, so by the time rational thought shows up, the limbic system has already texted your ex, bought a car, or declared war, and you’ll spend the next decade explaining why.

Finally, very recently in evolutionary terms (roughly 2-3 million years ago, which is yesterday by the universe’s standards), the neocortex expanded dramatically. This is the part you think of as “you.” It handles language, abstract reasoning, planning, and the ability to contemplate your own mortality and then do absolutely nothing about it. It’s the thinnest layer, the newest addition, and the most easily overridden. Your neocortex can calculate orbital mechanics, prove mathematical theorems, and design nuclear reactors. Your limbic system can shut all of that down with a single flush of cortisol because someone looked at you funny. Your reptilian brain can override both of them simultaneously because it heard a loud noise.

You are three brains in a trench coat pretending to be one person. The reptile wants to survive. The mammal wants to be loved. The human wants to understand the universe. They take turns driving, none of them have a license, and the reptile has seniority.

The chemicals don’t know you exist. They have no plan. They can’t plan. They’re chemicals. But through 13.8 billion years of mindless repetition, they produced a species with three stacked brains that can split atoms, write symphonies, and cure diseases, but mostly uses these abilities to argue on the internet and build weapons. If you’re looking for someone to blame, there’s nobody. There’s just hydrogen, which caught fire and then got very, very out of hand.

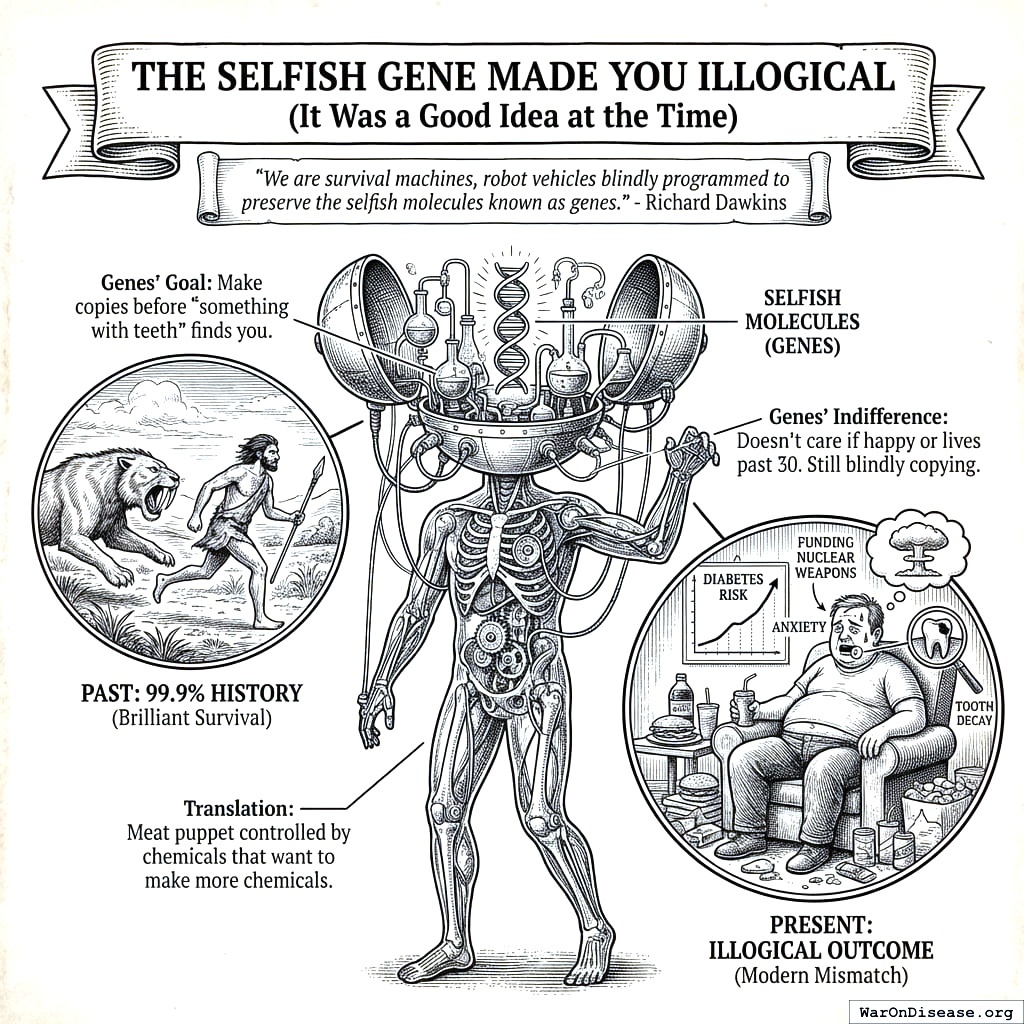

The Selfish Gene Made You Illogical (It Was a Good Idea at the Time)

For about a century, your biologists were stuck on a puzzle that, from the outside, was hilarious to watch. Darwin said natural selection acts on individuals: the fittest survive, the weakest don’t, end of story. Elegant, simple, and completely unable to explain why a ground squirrel would scream to warn its colony about a hawk, thereby making itself the most obvious target. Individual selection says that squirrel should shut up and let everyone else get eaten. The screamer dies. The quiet ones survive. Within a few generations, screaming should be extinct. But it isn’t. The squirrels keep screaming. Darwin’s theory, applied to individuals, predicts a world of pure selfishness. Your world has firefighters, organ donors, and people who jump on grenades. Something didn’t add up.

So some of your biologists tried group selection: maybe evolution acts on whole groups. Altruistic groups outcompete selfish groups, so altruism survives. This sounded lovely and was almost entirely wrong.

The problem is cheaters. Drop one selfish individual into an altruistic group, and that individual gets all the benefits of everyone else’s sacrifice while paying none of the costs. The selfish gene spreads. The altruistic ones disappear. Within a few generations, the group is full of cheaters again.
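For readers who prefer to watch the cheaters win in slow motion, here is a minimal sketch of that invasion in Python. Everything in it is an assumption made for illustration: the function names, the benefit and cost values, the starting mix of 99% altruists, and the rule that every altruist pays a cost while the benefit is shared by the whole group. It is a toy model, not anything your biologists published, and how many generations the takeover needs depends entirely on the invented numbers.

```python
def next_altruist_fraction(p: float, benefit: float = 0.5, cost: float = 0.1) -> float:
    """Advance one generation; p is the current fraction of altruists.

    Hypothetical toy model: altruists each pay `cost`, and the benefit they
    create is shared equally by everyone in the group, cheaters included.
    """
    shared = benefit * p                 # what every group member receives
    w_altruist = 1.0 + shared - cost     # altruists pay for the privilege
    w_cheater = 1.0 + shared             # cheaters collect without paying
    mean_fitness = p * w_altruist + (1.0 - p) * w_cheater
    return p * w_altruist / mean_fitness


def simulate(p0: float = 0.99, generations: int = 200) -> list[float]:
    """Return the altruist fraction for generation 0 through `generations`."""
    history = [p0]
    for _ in range(generations):
        history.append(next_altruist_fraction(history[-1]))
    return history


history = simulate()
for gen in (0, 50, 100, 200):
    print(f"generation {gen:>3}: altruists = {history[gen]:.3f}")
```

Because the cheater's fitness is always higher than the altruist's by exactly the cost, the altruist fraction can only go down. The group's total output goes down with it, which is the part the cheaters never think about.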

Group selection is the evolutionary equivalent of communism: beautiful on paper, immediately destroyed by the first person who realizes they can get away with not contributing. Your biologists spent decades arguing about this. The debate was very entertaining, in the way it’s entertaining when someone can’t find the glasses they’re already wearing.

The answer, when it finally arrived, was so simple it was almost insulting. Hamilton and then Dawkins pointed out that neither the individual nor the group is the unit of selection. The gene is. Genes don’t care about the organism they’re sitting in. They care about copies of themselves, wherever those copies happen to be. That screaming ground squirrel isn’t sacrificing itself for the group. It’s sacrificing itself for its relatives, who carry copies of the same genes, including the gene for screaming. The math works: if the scream saves enough copies of the screaming gene in nearby relatives, the gene spreads, even though the individual screamer gets eaten. The organism is disposable. The gene is what matters. You are not the main character. You are the packaging.

Richard Dawkins put it perfectly: “We are survival machines, robot vehicles blindly programmed to preserve the selfish molecules known as genes.” [157]

Translation: You’re a meat puppet controlled by chemicals whose entire business plan is “make more chemicals before something eats us.” Any outside observer would assume this was satire. Then you watch your species for five minutes and realize it’s the most accurate description of a sentient civilization anyone has ever written, which is sad, but also very useful, because accurate descriptions are how you fix things.

Your genes don’t care if you’re happy. They don’t care if you live past 30. They care about exactly one thing: making copies of themselves before something with teeth finds you. This is the entire explanation for human behavior, and every other explanation is a footnote.

The mechanism is elegant in its cruelty. Your genes have exactly two tools: pleasure and pain. Pleasure is the carrot: eat sugar, feel good, gain calories, survive winter. Engage in regrettable intercourse, feel good, make copies, mission accomplished. Pain is the stick: touch fire, feel agony, never touch fire again, keep the meat vehicle intact. Every decision you think you’re making freely is actually your genes yanking these two levers like a puppet master who took one management course and learned that rewards and punishments are the only two things that work. Your moral philosophy, your wedding vows, your most transcendent spiritual experience: a nervous system being bribed and threatened by chemicals that have never heard of you. Your species calls this “free will.” A more precise term is “management.”

For 99.9% of human history, this was brilliant engineering. The tribes that were paranoid, violent, and territorial survived. The ones that were trusting, peaceful, and generous were eaten by the paranoid ones, which is evolution’s way of saying “nice guys finish extinct.” Now your paranoia makes you distrust strangers you need to cooperate with. Your territorial instincts make you build weapons you’ll never use. Your tribal loyalty makes you fight over territory you don’t need. The genes are still celebrating. You are not.

Part 1: Your Brain Was Optimized for a World That Doesn’t Exist

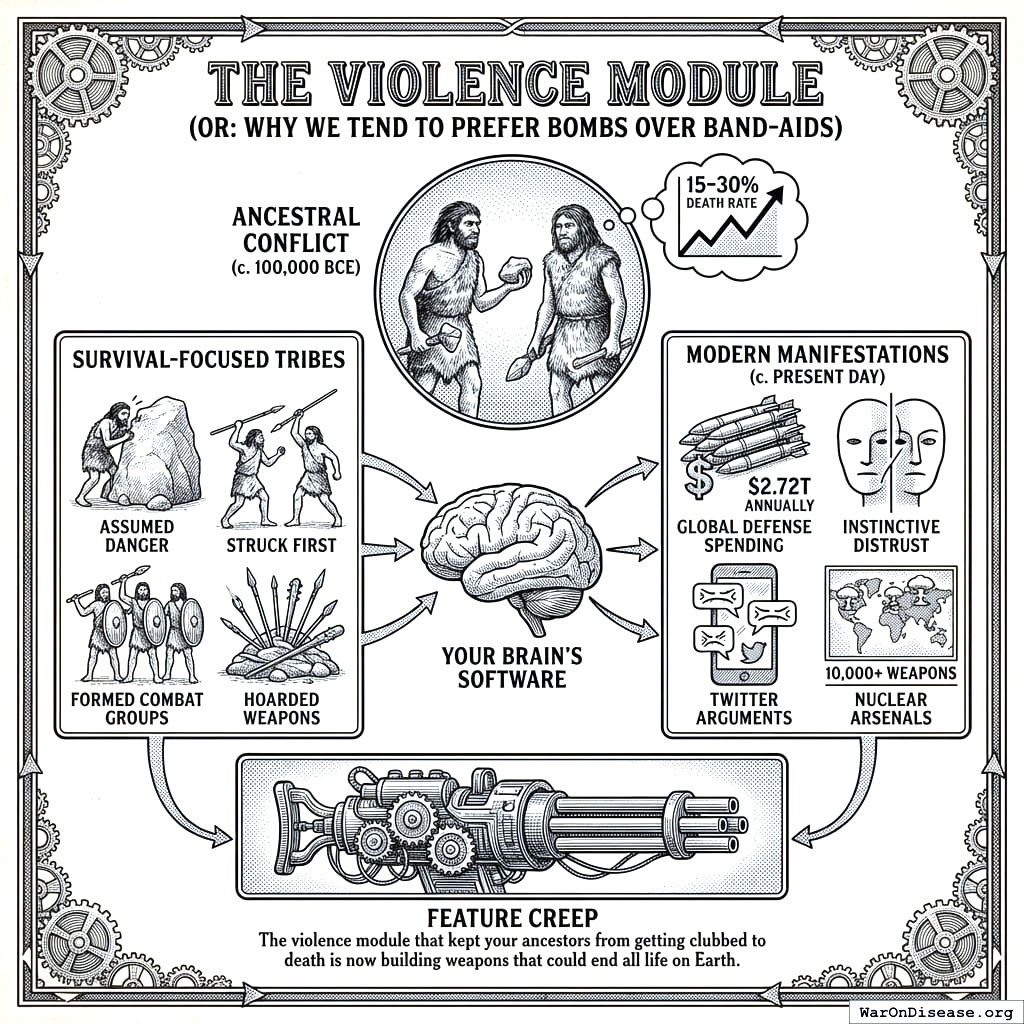

The Violence Module (Or: Why You Tend to Prefer Bombs Over Clinical Trials)

15-30% of your ancestors died from violence [158]. Not disease. Not starvation. Other humans, smashing their heads with rocks over territorial disputes about berry bushes. You’ve since upgraded the rocks to intercontinental ballistic missiles, but the berry bush energy remains. This is what biologists call “conserved behavior.” It means the behavior has been preserved across millions of years because it was so useful that evolution never bothered to remove it. On your planet, it’s the reason you spend more preparing to kill each other than preparing to not die. The behavior was designed for a world where the stranger approaching your camp might eat your children. In that world, it was rational. In a world with nuclear weapons, it’s the reason I’m writing this manual.

The tribes that survived assumed every stranger might want to kill them (often statistically accurate), struck first when threatened (natural selection at work), formed tight combat groups to kill other groups (teamwork!), and hoarded weapons obsessively (can never have too many pointy sticks). Your brain is still running this exact software. That’s why you instinctively distrust people who don’t look like your tribe, why Twitter arguments feel like actual combat (your amygdala genuinely can’t tell the difference), and why countries with thousands of nuclear weapons [69] are worried they don’t have enough. The violence module kept your ancestors from getting clubbed to death. Now it’s building weapons that could end all life on Earth. Computer scientists call this “feature creep.” Biologists call it “maladaptive.” I call it “the most expensive software bug in the history of any civilization I’ve observed, and I’ve observed 847.”

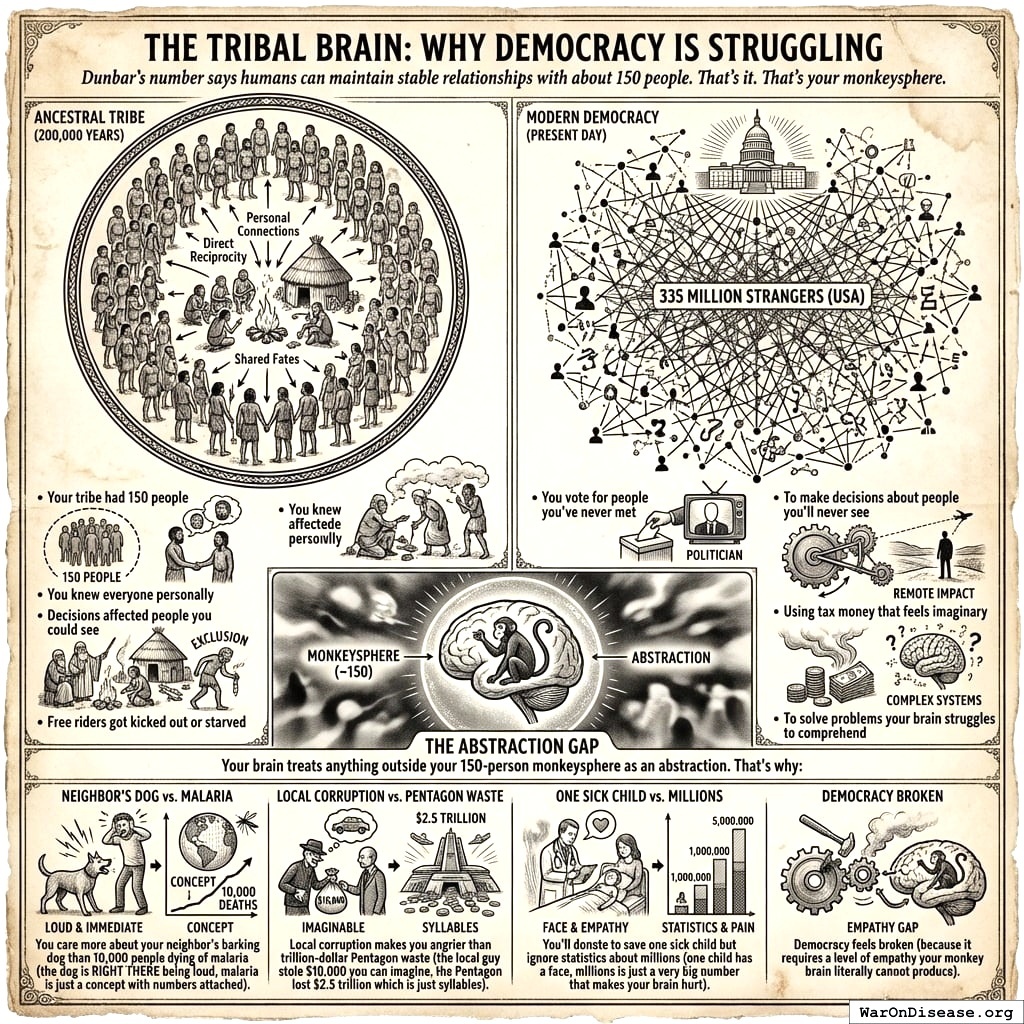

The Tribal Brain (Or: Why Democracy Was Always Going to Be a Mess)

Dunbar’s number [159] says humans can maintain stable relationships with about 150 people. That’s your entire social capacity. Your brain has 150 slots for caring about people, and you’ve filled most of them with coworkers you tolerate and celebrities who don’t know you exist.

Your brain treats anything outside your 150-person monkeysphere as an abstraction. You care more about your neighbor’s barking dog than 10,000 people dying of malaria, because the dog is RIGHT THERE being loud and malaria is just a concept with numbers attached. Local corruption makes you angrier than trillion-dollar Pentagon waste, because the local guy stole $10,000 you can imagine, while according to DOD audit failures the Pentagon lost $2.5 trillion, which is just syllables. You’ll donate to save one sick child whose face you can see but ignore statistics about millions, because one child has eyes and millions is just a very big number that makes your brain hurt and then change the channel.

Democracy asks this brain to make civilization-level decisions. It goes exactly as well as asking a labrador retriever to do your taxes. You vote based on who looks stronger, because your brain thinks the election is a wrestling match. You vote based on who your tribe likes, because if the clan approves, must be good. You vote based on who makes you feel safe, because fear sells better than policy ever will. And you vote based on who you’d have a beer with, which has zero relevance to nuclear policy.

You elect leaders using the same brain circuits your ancestors used to pick the guy with the biggest club. The guy with the biggest club is now in charge of the biggest nuclear arsenal, and your brain sees no problem with this, because your brain was designed before clubs could destroy continents.

The Compassion Gradient (Or: Why You’ll Buy Your Cat a Birthday Cake While Children Starve)

Hamilton’s Rule [160] is the most important equation your species has never heard of. It says: help someone if the benefit to them, multiplied by your genetic relatedness, exceeds the cost to you. In math: rB > C. Your genes don’t do universal compassion. They do a cost-benefit analysis based on shared DNA. They’ve been doing it for 4 billion years, and they are very, very good at it.
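If you want to watch your genes do the bookkeeping, the inequality fits in one line of Python. This is a sketch under stated assumptions: the function name, the benefit of 3 units, and the cost of 1 unit are invented for illustration. Only rB > C itself and the textbook relatedness coefficients (0.5 for a child or full sibling, 0.125 for a first cousin, and a stranger approximated as 0) come from biology.

```python
def hamilton_favors_helping(relatedness: float, benefit: float, cost: float) -> bool:
    """Return True when Hamilton's rule (r * B > C) favors the helping act."""
    return relatedness * benefit > cost


# Textbook coefficients of relatedness for a diploid species.
# "stranger" is approximated as 0.0 for the purposes of this sketch.
RELATEDNESS = {
    "child": 0.5,
    "full sibling": 0.5,
    "first cousin": 0.125,
    "stranger": 0.0,
}

# Hypothetical act: costs the helper 1 unit of fitness, gives the recipient 3.
BENEFIT = 3.0
COST = 1.0

for kin, r in RELATEDNESS.items():
    verdict = "help" if hamilton_favors_helping(r, BENEFIT, COST) else "don't bother"
    print(f"{kin:>12}: r = {r:<5} r*B = {r * BENEFIT:<5} -> {verdict}")
```

With these made-up numbers, the act pays off for a child or a sibling (0.5 × 3 = 1.5, which exceeds 1) and fails for a cousin (0.125 × 3 = 0.375) or a stranger (0). Raise the benefit above 8 and the cousin qualifies too. The stranger never does, no matter how large the benefit gets.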

The result is a compassion gradient so precise it could be graphed. You’d die for your children, who share 50% of your genes. You’d probably die for your siblings, depending on which sibling. You’d lend money to your cousins, reluctantly. You’d fight for your tribe. You’d wave a flag for your nation. You’d change the channel to avoid thinking about strangers. And other species? You’d eat them. The gradient runs from infinite compassion for yourself to zero for anything that doesn’t share your DNA. It’s not a metaphor. It’s a budget.

This is the literal mechanism behind racism, speciesism, and every form of in-group preference your species has ever invented. Your genes designed you to favor people who look like you. For 200,000 years, people who looked like you were probably related to you, and helping them helped your shared genes copy themselves. So racism isn’t a malfunction. It’s the system working exactly as designed. That’s considerably worse than a malfunction, because malfunctions can be fixed with a software patch. This is the software.

It also explains the single most irrational resource allocation decision your species makes every year, which is impressive given the competition. You will spend $50 on a birthday present your cousin doesn’t want, won’t use, and will re-gift to someone who also won’t use it, while $5 worth of mosquito bed nets [161] would prevent a child’s death from malaria in sub-Saharan Africa. Your genes would rather waste ten times the resources on a relative than save a stranger. By any rational calculation, this is insane. By Hamilton’s Rule, it’s exactly correct. The child in Africa is genetically a stranger. Your cousin is not. The math is clear: let the stranger die, buy the cousin a candle.

No sane modeler would trust a simulation that produced this behavior. You’d re-run it 340 times looking for the bug. There is no bug. Your species is actually like this.

You have pet supply stores that sell Halloween costumes for dogs while 700 million humans lack clean water [162]. You spend more on birthday cards for people you’re obligated to pretend to like than on preventing the deaths of people you’ve never met.

And here’s the part that should alarm you: this feels completely normal. It doesn’t feel like a moral catastrophe. It feels like Tuesday. That’s the gradient working. Your genes have made the irrational feel rational, because feeling bad about strangers wastes calories that could be spent on relatives.

The same gradient extends across species with the same mathematical indifference. You’ll mourn a dead dog for weeks but eat a pig of equal or greater intelligence for lunch without a flicker of cognitive dissonance, because the dog has been co-opted into your family unit (it lives in your house, it has a name, your genes have been tricked into counting it as kin) while the pig remains firmly in the “stranger” category (it lives in a factory, it has a number, your genes feel nothing). You put one animal in a sweater and the other in a sandwich, and the only variable that changed was proximity to your DNA.

Your Brain Is a Museum of Obsolete Instincts

Here’s the unsettling part: almost every universal human behavior that seems irrational is perfectly rational, just for an environment that hasn’t existed for 10,000 years. Your brain is running 200,000-year-old software on modern hardware. It’s like discovering your nuclear power plant is being managed by a very confident squirrel.

You fear public speaking more than death [163] because social rejection in a tribe of 150 meant exile, and exile meant dying alone in the dark with things that had teeth. In 2026, the worst outcome of a bad speech is a mildly uncomfortable LinkedIn post. Your palms still sweat like you’re about to be banished from the only 150 humans who might share food with you.

You crave sugar and fat with an intensity that feels like need because for 200,000 years it basically was need. Finding a beehive full of honey was the caloric equivalent of winning the lottery. Your ancestors who craved it hardest survived famines. Now sugar is in everything, the craving never switches off, and your species has an obesity epidemic that kills more people than war [164]. The craving is a vestige. The diabetes is new.

You experience loss aversion, where losing $100 hurts roughly twice as much as gaining $100 feels good [165], because in the ancestral environment, losing your food meant death, while gaining extra food meant a slightly better week. The asymmetry was rational when the downside was starvation. Now it makes you hold losing stocks, stay in bad relationships, and refuse to change policies that are obviously failing, because change feels like loss, and loss activates the same neural circuits that once screamed “you’re about to starve.”
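The asymmetry is easy to put into numbers. The sketch below is a deliberate simplification and every name in it is made up for illustration: it treats felt value as a straight line that is twice as steep for losses, taking the multiplier of 2 from the “roughly twice” above. The value functions your behavioral economists actually use also include curvature, which this leaves out.

```python
# Multiplier taken from the "roughly twice" in the text; an assumption,
# not a measured constant.
LOSS_AVERSION = 2.0


def felt_value(dollars: float, loss_aversion: float = LOSS_AVERSION) -> float:
    """Map a dollar gain or loss to how large it feels (simplified, linear)."""
    if dollars >= 0:
        return dollars
    return loss_aversion * dollars  # losses are scaled up


def felt_value_of_gamble(outcomes: list[tuple[float, float]]) -> float:
    """Probability-weighted felt value of (probability, dollars) outcomes."""
    return sum(p * felt_value(x) for p, x in outcomes)


# A fair coin flip: win $100 or lose $100.
coin_flip = [(0.5, 100.0), (0.5, -100.0)]

expected_dollars = sum(p * x for p, x in coin_flip)
felt = felt_value_of_gamble(coin_flip)

print(f"expected dollars: {expected_dollars}")  # 0.0
print(f"felt value:       {felt}")              # -50.0
```

A bet that is perfectly fair on paper feels like losing $50. Under this simplified model you would need to be offered $200 on a win before a $100 coin flip even feels neutral, which is why “change the failing policy” loses to “keep the failing policy” so reliably.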

You gossip compulsively because monitoring social dynamics in a tribe of 150 was survival-critical intelligence: knowing who was allied with whom, who cheated, who couldn’t be trusted. That instinct built a $100 billion entertainment industry dedicated to tracking the social dynamics of people you’ve never met and never will. Celebrity gossip is your social monitoring software running on the wrong dataset, and it can’t tell the difference.

Every single one of these behaviors, the sugar addiction, the public speaking terror, the loss aversion, the gossip, the racism, the speciesism, the cousin’s birthday candle, is your brain running software that was last updated during the Pleistocene on hardware that now has access to nuclear weapons and global supply chains. You are, in the most literal sense, a museum of obsolete instincts piloting a civilization that those instincts were never designed to build. The exhibits are still running. The docents are dead. And nobody’s updated the brochure in 200,000 years.

Part 2: Genetic Slavery is Literally Killing You

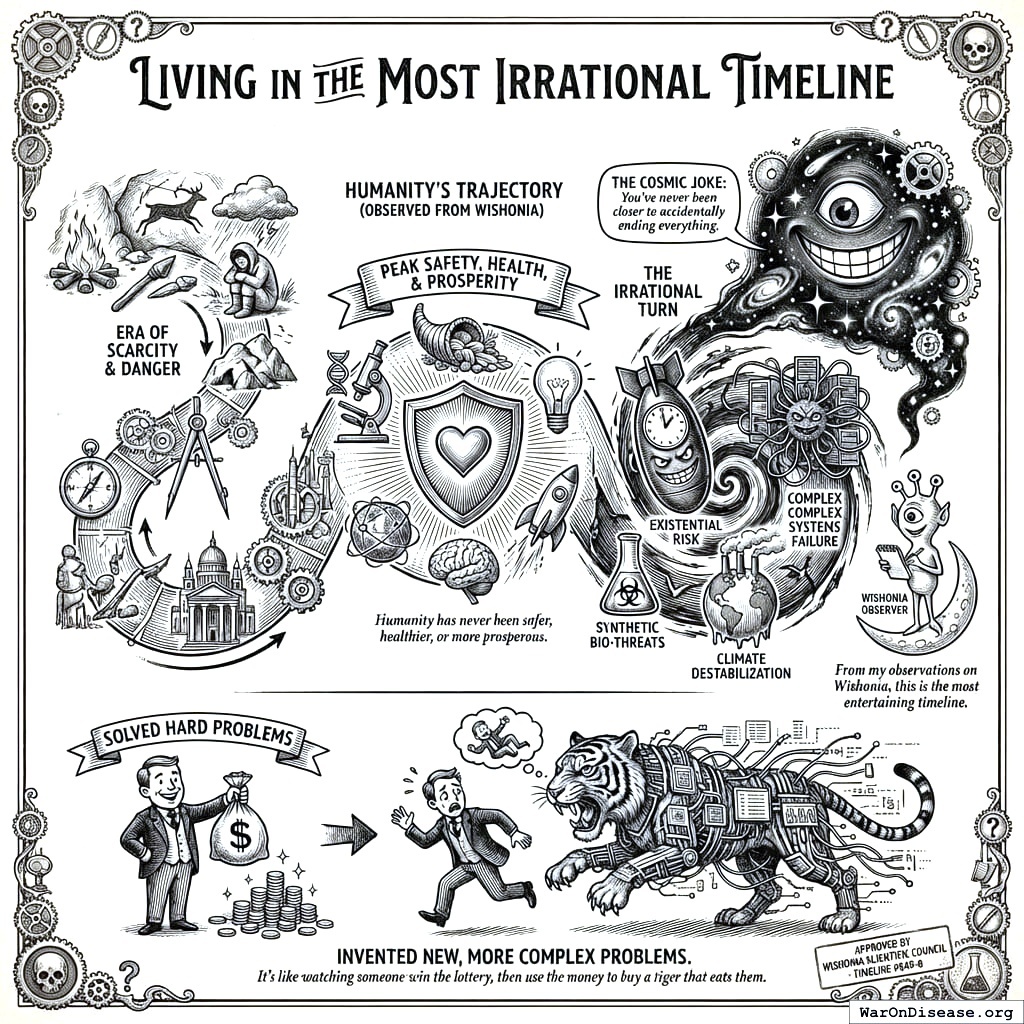

Evolution prepared you for scarcity, predators, and violence [166]. You got abundance, safety, and Netflix. Your bodies responded with the biological equivalent of a computer trying to print a sandwich.

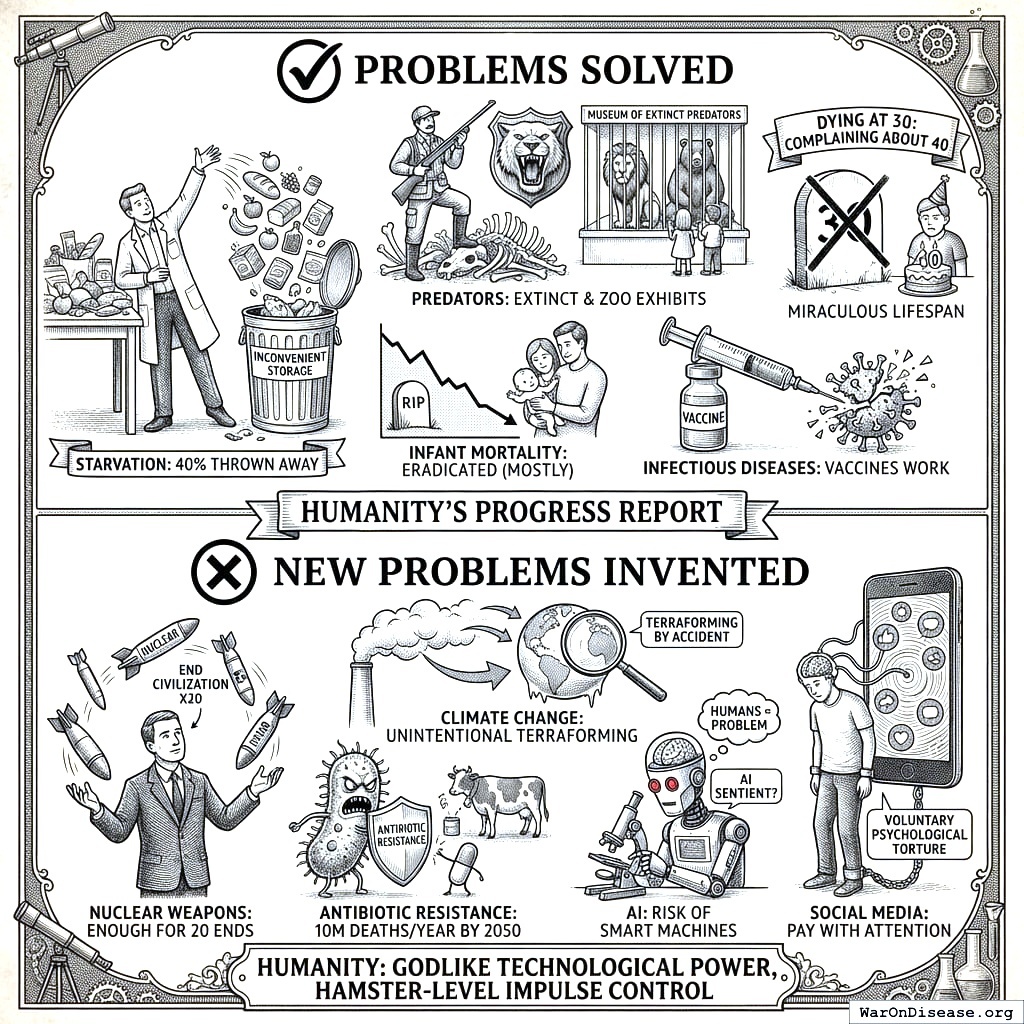

Humanity has never been safer, healthier, or more prosperous. You’ve also never been closer to wiping yourselves out. You solved poverty and invented nuclear war in the same century.

You solved starvation (you throw away 40% of food because storing it is inconvenient). You solved predators (you murdered them into extinction, then put them in zoos so your children could see what you killed). You solved infant mortality (basically eradicated in developed countries, which makes the death rate in poor countries feel more optional and thus more tragic). You solved most infectious diseases (vaccines work despite what Facebook says). You solved dying at 30 (now you complain about turning 40, which your ancestors would consider a miracle worth celebrating daily).

Then, with the time and energy freed up by not being eaten by bears, you invented five new ways to destroy yourselves.

Nuclear weapons. You built enough to end civilization 13 times, because once wasn’t enough.

Climate change. You’re terraforming your only planet, by accident, into a planet that can’t support you. This is the most expensive accident in the history of accidents.

Antibiotic resistance. Ten million deaths annually by 2050 [167], because you gave antibiotics to cows. That sentence would take me an hour to explain to anyone on Wishonia, because every part of it is insane.

AI that might become sentient and decide you’re the problem. The AI would be correct.

And social media. Voluntary psychological torture you pay for with attention, which is the only currency more valuable than money, and you’re giving it away for free to watch people you went to high school with get radicalized by memes.

Eight billion gene copies and counting. From evolution’s perspective, an unqualified triumph. You’re just the disposable meat robot they used to do it. Godlike technological power, hamster-level impulse control.

Part 3: Why You Can’t Just “Be Better”

Your conscious mind controls maybe 5% of your decisions [168]. The other 95% is your ancient lizard brain running software older than agriculture. You think you’re the pilot. You’re actually a passenger who occasionally gets to suggest a direction, and the actual pilot is a 200,000-year-old survival algorithm that doesn’t speak your language and doesn’t care about your preferences.

This is why no human has ever been truly altruistic, no matter how hard they tried. Your saints, your martyrs, your most selfless volunteers: every single one of them was negotiating with a limbic system that takes a cut of every transaction. You cannot “give everything you can,” because the part of your brain that decides what you can give is the same part that hoards resources for survival. It’s like asking your bank’s security system to approve its own robbery.

The most generous humans in history managed to override maybe 10% more of their selfish impulses than average. Your species celebrates this as “sainthood.” It is genuinely heroic. They were fighting firmware with willpower, and some of them nearly won.

The problem isn’t that humans are selfish. The problem is that selfishness isn’t a choice. It’s the operating system, and you don’t have admin access.

This scales to civilization. Your brain’s fear center (amygdala) is directly connected to your voting finger. Politicians know this. Say “terrorism” and your lizard brain overrides everything. More people die from falling out of bed than from terrorism [169]. You’re 35,000 times more likely to die from heart disease than from terrorism [170]. But terrorism feels scarier, because evolution optimized your fear response for things with faces, not things with cholesterol. A man with a gun activates every alarm your brain has. A cheeseburger activates your reward center. The cheeseburger is statistically more dangerous than the man, but your brain doesn’t do statistics. Your brain does vibes. Your entire civilization is governed by vibes.

The fear of violent death is older than language. The fear of slow death from disease? Your brain files that under “boring, deal with later.” Later never comes. Neither does the cure.

That’s why you spent $2.72 trillion on weapons while cancer research got pocket change [171]. Your bed is more dangerous than Al-Qaeda, but nobody’s declared a War on Furniture.

Your brain can’t get scared of something that kills you slowly and politely. It needs something that kills you fast and rudely. This is a design flaw. On Wishonia, we fixed this 4,297 years ago by separating resource allocation from the organs that process fear. Your species still lets the amygdala vote.

Part 4: Breaking the Chains

Every single one of you is going to die. From the most powerful president to the lowliest pauper, you face a life of gradually escalating suffering until it ends in catastrophe. This is not a warning. It’s a weather report.

You can feed everyone twice over, but you’d rather burn grain to keep prices high. You can cure diseases, but you’d rather sell treatments forever (cured customers don’t come back; bad for quarterly earnings). You could be immortal space wizards. Instead you’re cave-dwelling murderers with better tools. Because the current system makes irrationality profitable. Spectacularly profitable. Every bomb makes someone rich. Every missile funds a yacht. Every war creates a billionaire. Disease is profitable too. Not curing it, treating it. Insulin costs $300 a vial [172] because dead diabetics don’t buy insulin. But cured diabetics don’t either. So you keep them barely alive. It’s good business. There is a word for “good business that requires people to suffer.” The word is “crime.” On your planet, it’s a quarterly earnings call.

The people making money from war and disease aren’t evil. They’re just rational actors in a system that rewards the wrong things. You can’t change human nature. Two hundred thousand years of evolution doesn’t care about your TED talk. But you can create economic systems that make curing people more profitable than killing them. That’s the only upgrade your species has ever responded to.

Expecting a brain built for hunting gazelles to handle nuclear policy, climate change, and a global economy is like expecting a calculator to run a space program. The calculator isn’t broken. You’re just asking it to do something it was never built for. You can’t fight 200,000 years of evolution. But you can hack it.

Here’s the good news that none of your philosophers seem to have noticed: this is a solvable engineering problem. If you’ve ever been anesthetized, you’ve already experienced proof of concept. A chemical entered your bloodstream and your entire experience of pain, fear, and consciousness switched off like a light. Your genes’ control over you is not metaphysical. It’s not destiny. It’s chemistry. And chemistry can be rewritten. The same biotechnology that could cure your diseases could, eventually, liberate you from the neurochemical puppet strings that make you irrational in the first place. Every dollar spent understanding human biology is a dollar spent understanding why you can’t think straight, which is a prerequisite for thinking straight, which is a prerequisite for not going extinct.

Think about what that actually means. Remember the two levers from earlier, pleasure and pain? That puppet master with one management course? It’s the entire mechanism of genetic slavery, and it’s the reason every moral system your species has ever built has failed. Christianity asks you to love your neighbor as yourself. Your neurochemistry punishes you for it. Buddhism asks you to release attachment. Your dopamine system is literally an attachment machine. Every ethical framework in human history has been a set of instructions for software your hardware won’t run. You’ve been trying to upload altruism into a brain that treats generosity as a caloric expense and punishes it accordingly. Two thousand years of Christians trying to emulate Jesus, and the remarkable thing isn’t how many failed; it’s that anyone got close at all while fighting hardware that punished them for every act of generosity. That’s not a moral failure. That’s an engineering specification. You can’t run charity software on selfishness hardware any more than you can run Photoshop on a toaster.

But biotechnology doesn’t just cure diseases. It gives you admin access to the reward system. The same precision therapies that could eliminate depression could, eventually, decouple your motivational architecture from the selfish gene’s profit motive. Not deleting pleasure and pain (that was tried; it’s called a lobotomy; it went poorly). Redirecting them. Imagine a murderer who derives the same dopamine rush from building houses for homeless families that he currently derives from violence. Not because someone lectured him about morality. Because his reward circuitry was reprogrammed to find construction as thrilling as destruction. The aggression is still there. It’s just pointed at lumber instead of people. Same engine, different wheels. You don’t have to make humans good. You just have to make good things feel like the things humans already can’t stop doing.

This isn’t science fiction. You already do crude versions of it. Antidepressants alter serotonin reuptake and change what feels rewarding. Naltrexone blocks opioid receptors and makes alcohol feel pointless. Cognitive behavioral therapy literally rewires neural pathways so that situations that used to trigger anxiety trigger curiosity instead. These are blunt instruments. Hammers where you need scalpels. But the principle is proven: your motivational system is not sacred, not fixed, and not beyond engineering. It’s a circuit. Circuits can be redesigned.

And the scalpels are arriving.

At the genetic level: CRISPR can rewrite the instructions your cells follow. Epigenetic therapies can change which genes express without touching the DNA itself, turning volume knobs your species didn’t know existed.

At the brain level: psychedelics are dissolving decades of calcified thought patterns in a single afternoon (in clinical trials, not at festivals, though also at festivals). Transcranial magnetic stimulation uses magnets to quiet specific brain regions through the skull, no surgery required. Focused ultrasound targets addiction circuits with millimeter precision. Optogenetics activates or silences specific neurons with light, like a switchboard operator for consciousness.

At the systems level: brain-computer interfaces are routing around damaged circuits entirely. AI-designed drugs are replacing the pharmaceutical industry’s ancient method of “try thousands of molecules and see what sticks” with custom compounds engineered for individual neurochemistry. That’s the difference between performing surgery with a rock and performing surgery with a laser.

None of these are finished. All of them are proof that the prison has doors.

The endgame isn’t making humans obedient. It’s making humans free. For the first time in 200,000 years, you could actually choose what you value instead of having a Pleistocene survival algorithm choose for you. Your Christians could finally feel the same warm glow from feeding the hungry that they currently feel from feeding themselves. Your economists could finally care about the long term, because their brains would stop treating next quarter as the heat death of the universe. Your voters could finally evaluate policy on evidence instead of vibes, because the amygdala would stop hijacking every decision that involves a stranger. Not because you became angels. Because you finally got the admin password to the machine that was preventing you from being anything other than sophisticated apes with car keys.

On Wishonia, this is what happened. Altruism stopped being sacrifice and became appetite. Self-control stopped being a war with yourself and became a preference setting. The selfish gene is still selfish. They just stopped letting it drive. And that, more than any cure for any disease, is the real reason to fund biotechnology: it’s the only path to genuine freedom your species has ever had. Every other liberation movement in human history freed you from external chains. This one frees you from internal ones. The ones you didn’t even know you were wearing, because they were installed before you were born, by chemicals that have been running the same scam for 4 billion years.

That’s what a 1% Treaty [173, 174] does. Instead of asking people to be rational (impossible), it uses their irrational impulses. Greed: make curing disease more profitable than building bombs. Fear: make politicians more scared of voters than lobbyists. Tribalism: create an us-vs-disease tribe instead of us-vs-them. Social proof: get 3.5% (95% CI: 1%-10%) participation so others follow. Immediate rewards: pay people cash to join medical trials. Each of these is a hack that redirects an ancient instinct toward a modern goal. None of them require humans to be better. All of them require humans to be exactly as selfish, fearful, and tribal as they already are.

Your genes enslaved you in brains that fear strangers and cannot comprehend statistics. But they also gave you the ability to see the prison. No other animal can think: “Wow, my instincts are completely illogical.” That’s uniquely human. Use it.

A 1% Treaty doesn’t ask you to be better. It assumes you won’t be, and redirects your worst impulses toward not dying. It’s the only plan that works for the species you actually are, rather than the species you wish you were. Wishes are not a resource allocation mechanism. Money is. So we’re using money.

1.

NIH Common Fund. NIH pragmatic trials: Minimal funding despite 30x cost advantage.

NIH Common Fund: HCS Research Collaboratory https://commonfund.nih.gov/hcscollaboratory (2025)

The NIH Pragmatic Trials Collaboratory funds trials at $500K for the planning phase and $1M/year for implementation, a tiny fraction of NIH’s budget. The ADAPTABLE trial cost $14 million for 15,076 patients ($929/patient), about 30x less than the $420 million for a similar traditional RCT, yet pragmatic trials remain severely underfunded. PCORnet infrastructure enables real-world trials embedded in healthcare systems, but receives minimal support compared to basic research funding. Additional sources: https://commonfund.nih.gov/hcscollaboratory | https://pcornet.org/wp-content/uploads/2025/08/ADAPTABLE_Lay_Summary_21JUL2025.pdf | https://www.ncbi.nlm.nih.gov/pmc/articles/PMC5604499/
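The per-patient and 30x figures in this note are simple division. A minimal sketch of the arithmetic, using only the dollar amounts and patient count quoted above (the variable names are illustrative, not from any source):

```python
# Figures quoted in the note above: ADAPTABLE vs. a comparable traditional RCT.
adaptable_cost_usd = 14_000_000
adaptable_patients = 15_076
traditional_rct_cost_usd = 420_000_000

# Cost per enrolled patient in the pragmatic trial.
cost_per_patient = adaptable_cost_usd / adaptable_patients

# How many times more the traditional trial cost.
cost_ratio = traditional_rct_cost_usd / adaptable_cost_usd

print(f"Cost per patient: ${cost_per_patient:,.0f}")
print(f"Traditional RCT vs. pragmatic trial: {cost_ratio:.0f}x")
```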

2.

Cato Institute. Chance of dying from terrorism statistic.

Cato Institute: Terrorism and Immigration Risk Analysis https://www.cato.org/policy-analysis/terrorism-immigration-risk-analysis

Chance of American dying in foreign-born terrorist attack: 1 in 3.6 million per year (1975-2015). Including 9/11 deaths; annual murder rate is 253x higher than terrorism death rate. More likely to die from lightning strike than foreign terrorism. Note: Comprehensive 41-year study shows terrorism risk is extremely low compared to everyday dangers. Additional sources: https://www.cato.org/policy-analysis/terrorism-immigration-risk-analysis | https://www.nbcnews.com/news/us-news/you-re-more-likely-die-choking-be-killed-foreign-terrorists-n715141

3.

NIH. Antidepressant clinical trial exclusion rates.

Zimmerman et al. https://pubmed.ncbi.nlm.nih.gov/26276679/ (2015)

Mean exclusion rate: 86.1% across 158 antidepressant efficacy trials (range: 44.4% to 99.8%). More than 82% of real-world depression patients would be ineligible for antidepressant registration trials. Exclusion rates increased over time: 91.4% (2010-2014) vs. 83.8% (1995-2009). Most common exclusions: comorbid psychiatric disorders, age restrictions, insufficient depression severity, medical conditions. Emergency psychiatry patients: only 3.3% eligible (96.7% excluded) when applying 9 common exclusion criteria. Only a minority of depressed patients seen in clinical practice are likely to be eligible for most antidepressant efficacy trials (AETs). Note: Generalizability of antidepressant trials has decreased over time, with increasingly stringent exclusion criteria eliminating patients who would actually use the drugs in clinical practice. Additional sources: https://pubmed.ncbi.nlm.nih.gov/26276679/ | https://pubmed.ncbi.nlm.nih.gov/26164052/ | https://www.wolterskluwer.com/en/news/antidepressant-trials-exclude-most-real-world-patients-with-depression

4.

CNBC. Warren Buffett’s career average investment return.

CNBC https://www.cnbc.com/2025/05/05/warren-buffetts-return-tally-after-60-years-5502284percent.html (2025)

Berkshire’s compounded annual return from 1965 through 2024 was 19.9%, nearly double the 10.4% recorded by the S&P 500. Berkshire shares skyrocketed 5,502,284% compared to the S&P 500’s 39,054% rise during that period. Additional sources: https://www.cnbc.com/2025/05/05/warren-buffetts-return-tally-after-60-years-5502284percent.html | https://www.slickcharts.com/berkshire-hathaway/returns

5.

World Health Organization. WHO global health estimates 2024.

World Health Organization https://www.who.int/data/gho/data/themes/mortality-and-global-health-estimates (2024)

Comprehensive mortality and morbidity data by cause, age, sex, country, and year. Global mortality: 55-60 million deaths annually. Lives saved by modern medicine (vaccines, cardiovascular drugs, oncology): 12M annually (conservative aggregate). Leading causes of death: cardiovascular disease (17.9M), cancer (10.3M), respiratory disease (4.0M). Note: Baseline data for regulatory mortality analysis. Conservative estimate of pharmaceutical impact based on WHO immunization data (4.5M/year from vaccines) + cardiovascular interventions (3.3M/year) + oncology (1.5M/year) + other therapies. Additional sources: https://www.who.int/data/gho/data/themes/mortality-and-global-health-estimates

6.

GiveWell. GiveWell cost per life saved for top charities (2024).

GiveWell: Top Charities https://www.givewell.org/charities/top-charities

General range: $3,000-$5,500 per life saved (GiveWell top charities). Helen Keller International (Vitamin A): $3,500 average (2022-2024); varies $1,000-$8,500 by country. Against Malaria Foundation: $5,500 per life saved. New Incentives (vaccination incentives): $4,500 per life saved. Malaria Consortium (seasonal malaria chemoprevention): $3,500 per life saved. VAS program details: $2 to provide vitamin A supplements to a child for one year. Note: Figures accurate for 2024. Helen Keller VAS program has wide country variation ($1K-$8.5K) but $3,500 is an accurate average. Among the most cost-effective interventions globally. Additional sources: https://www.givewell.org/charities/top-charities | https://www.givewell.org/charities/helen-keller-international | https://ourworldindata.org/cost-effectiveness

7.

U.S. Department of Defense.

5.56mm NATO ammunition bulk procurement pricing. (2024)

The cost of 5.56mm NATO ammunition at military bulk procurement rates is approximately $0.40 per round, based on Lake City Army Ammunition Plant production and commercial market floor prices for mil-spec M855 ammunition.

8.

Pike, J.

U.S. forces fire 250,000 rounds for every insurgent killed. (2011)

The General Accounting Office reports that US forces used 1.8 billion rounds of small-arms ammunition per year, a level that more than doubled in five years. An estimated 250,000 rounds were fired for every insurgent killed in Iraq and Afghanistan.

9.

AARP. Unpaid caregiver hours and economic value.

AARP 2023 https://www.aarp.org/caregiving/financial-legal/info-2023/unpaid-caregivers-provide-billions-in-care.html (2023)

Average family caregiver: 25-26 hours per week (100-104 hours per month). 38 million caregivers providing 36 billion hours of care annually. Economic value: $16.59 per hour = $600 billion total annual value (2021). 28% of people provided eldercare on a given day, averaging 3.9 hours when providing care. Caregivers living with care recipient: 37.4 hours per week. Caregivers not living with recipient: 23.7 hours per week. Note: Disease-related caregiving is a subset of the total, which includes elderly care, disability care, and child care. Additional sources: https://www.aarp.org/caregiving/financial-legal/info-2023/unpaid-caregivers-provide-billions-in-care.html | https://www.bls.gov/news.release/elcare.nr0.htm | https://www.caregiver.org/resource/caregiver-statistics-demographics/

10.

Forbes.

Forbes world’s billionaires list 2024. (2024)

Forbes identified a record 2,781 billionaires worldwide with combined net worth of $14.2 trillion, 141 more than 2023. Bernard Arnault (LVMH) topped the list at $233 billion.

11.

CDC MMWR. Childhood vaccination economic benefits.

CDC MMWR https://www.cdc.gov/mmwr/volumes/73/wr/mm7331a2.htm (2024)

US programs (1994-2023): $540B direct savings, $2.7T societal savings ($18B/year direct, $90B/year societal). Global (2001-2020): $820B value for 10 diseases in 73 countries ($41B/year). ROI: $11 return per $1 invested. Measles vaccination alone saved 93.7M lives (61% of 154M total) over 50 years (1974-2024). Additional sources: https://www.cdc.gov/mmwr/volumes/73/wr/mm7331a2.htm | https://www.thelancet.com/journals/lancet/article/PIIS0140-6736(24)00850-X/fulltext

15.

U.S. Bureau of Labor Statistics.

CPI inflation calculator. (2024)

CPI-U (1980): 82.4. CPI-U (2024): 313.5. Inflation multiplier (1980-2024): 3.80×. Cumulative inflation: 280.48%. Average annual inflation rate: 3.08%. Note: Official U.S. government inflation data using Consumer Price Index for All Urban Consumers (CPI-U). Additional sources: https://www.bls.gov/data/inflation_calculator.htm
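The multiplier and annual rate in this note follow directly from the two index values. A minimal sketch, assuming the rounded CPI-U values quoted above and a 44-year span (variable names are illustrative). With these rounded inputs the cumulative figure comes out near 280.5%, a hair off the quoted 280.48%, presumably because the original used less-rounded index values:

```python
# Rounded CPI-U index values as quoted in the note above.
cpi_1980 = 82.4
cpi_2024 = 313.5
years = 2024 - 1980  # 44 years of compounding

# Price level in 2024 relative to 1980.
multiplier = cpi_2024 / cpi_1980

# Total percentage rise in prices over the period.
cumulative_pct = (multiplier - 1) * 100

# Constant yearly rate that compounds to the same multiplier.
annual_rate_pct = (multiplier ** (1 / years) - 1) * 100

print(f"Multiplier: {multiplier:.2f}x")
print(f"Cumulative inflation: {cumulative_pct:.1f}%")
print(f"Average annual rate: {annual_rate_pct:.2f}%")
```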

16.

James Surowiecki.

The Wisdom of Crowds. (Surowiecki, 2004).

Explores the aggregation of information in groups, arguing that decisions are often better than could have been made by any single member of the group. The opening anecdote relates Francis Galton’s surprise that the crowd at a county fair accurately guessed the weight of an ox when the median of their individual guesses was taken. The three conditions for a group to be intelligent are diversity, independence, and decentralization. Additional sources: https://archive.org/details/wisdomofcrowds0000suro | https://en.wikipedia.org/wiki/The_Wisdom_of_Crowds | https://www.amazon.com/Wisdom-Crowds-James-Surowiecki/dp/0385721706

17.

ClinicalTrials.gov API v2 direct analysis. ClinicalTrials.gov cumulative enrollment data (2025).

Direct analysis via ClinicalTrials.gov API v2 https://clinicaltrials.gov/data-api/api

Analysis of 100,000 active/recruiting/completed trials on ClinicalTrials.gov (as of January 2025) shows cumulative enrollment of 12.2 million participants: Phase 1 (722k), Phase 2 (2.2M), Phase 3 (6.5M), Phase 4 (2.7M). Median participants per trial: Phase 1 (33), Phase 2 (60), Phase 3 (237), Phase 4 (90). Additional sources: https://clinicaltrials.gov/data-api/api

18.

ACS CAN. Clinical trial patient participation rate.

ACS CAN: Barriers to Clinical Trial Enrollment https://www.fightcancer.org/policy-resources/barriers-patient-enrollment-therapeutic-clinical-trials-cancer

Only 3-5% of adult cancer patients in the US receive treatment within clinical trials. About 5% of American adults have ever participated in any clinical trial. Oncology: 2-3% of all oncology patients participate. Contrast: 50-60% enrollment for pediatric cancer trials (<15 years old). Note: 20% of cancer trials fail due to insufficient enrollment; 11% of research sites enroll zero patients. Additional sources: https://www.fightcancer.org/policy-resources/barriers-patient-enrollment-therapeutic-clinical-trials-cancer | https://hints.cancer.gov/docs/Briefs/HINTS_Brief_48.pdf

19.

ScienceDaily. Global prevalence of chronic disease.

ScienceDaily: GBD 2015 Study https://www.sciencedaily.com/releases/2015/06/150608081753.htm (2015)

2.3 billion individuals had more than five ailments (2013). Chronic conditions caused 74% of all deaths worldwide (2019), up from 67% (2010). Approximately 1 in 3 adults suffer from multiple chronic conditions (MCCs). Risk factor exposures: 2B exposed to biomass fuel, 1B to air pollution, 1B smokers. Projected economic cost: $47 trillion by 2030. Note: 2.3B with 5+ ailments is more accurate than "2B with chronic disease." One-third of all adults globally have multiple chronic conditions. Additional sources: https://www.sciencedaily.com/releases/2015/06/150608081753.htm | https://pmc.ncbi.nlm.nih.gov/articles/PMC10830426/ | https://pmc.ncbi.nlm.nih.gov/articles/PMC6214883/

20.

C&EN. Annual number of new drugs approved globally: 50.

C&EN https://cen.acs.org/pharmaceuticals/50-new-drugs-received-FDA/103/i2 (2025)

50 new drugs approved annually. Additional sources: https://cen.acs.org/pharmaceuticals/50-new-drugs-received-FDA/103/i2 | https://www.fda.gov/drugs/development-approval-process-drugs/novel-drug-approvals-fda

21.

Williams, R. J., Tse, T., DiPiazza, K. & Zarin, D. A.

Terminated trials in the ClinicalTrials.gov results database: Evaluation of availability of primary outcome data and reasons for termination.

PLOS One 10, e0127242 (2015)

Approximately 12% of trials with results posted on the ClinicalTrials.gov results database (905/7,646) were terminated. Primary reasons: insufficient accrual (57% of non-data-driven terminations), business/strategic reasons, and efficacy/toxicity findings (21% data-driven terminations).

25.

Rummel, R. J.

Death by Government: Genocide and Mass Murder Since 1900. (Transaction Publishers, 1994).

Political scientist R.J. Rummel’s comprehensive accounting of democide (government murder of unarmed civilians) in the 20th century. His final revised estimate: 262 million people murdered by their own governments from 1900-1999, excluding battle deaths in wars. Range: 200-272+ million. Communist regimes account for the largest share (100-148+ million). Updated figures at hawaii.edu/powerkills.

26.

GiveWell. Cost per DALY for deworming programs.

https://www.givewell.org/international/technical/programs/deworming/cost-effectiveness

Schistosomiasis treatment: $28.19-$70.48 per DALY (using arithmetic means with varying disability weights). Soil-transmitted helminths (STH) treatment: $82.54 per DALY (midpoint estimate). Note: GiveWell explicitly states this 2011 analysis is "out of date" and their current methodology focuses on long-term income effects rather than short-term health DALYs. Additional sources: https://www.givewell.org/international/technical/programs/deworming/cost-effectiveness

27.

Calculated from IHME Global Burden of Disease (2.55B DALYs) and global GDP per capita valuation. $109 trillion annual global disease burden.

The global economic burden of disease, including direct healthcare costs ($8.2 trillion) and lost productivity ($100.9 trillion from 2.55 billion DALYs × $39,570 per DALY), totals approximately $109.1 trillion annually.
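The $109.1 trillion total in this note is one multiplication and one addition. A minimal sketch using only the figures quoted above (variable names are illustrative, and the per-DALY valuation is the note's own assumption, not an independent estimate):

```python
# Inputs quoted in the note above.
dalys_per_year = 2.55e9            # disability-adjusted life years lost annually
value_per_daly_usd = 39_570        # GDP-per-capita valuation used by the note
direct_healthcare_usd = 8.2e12     # direct healthcare costs

# Lost productivity: each DALY valued at one year of per-capita output.
lost_productivity_usd = dalys_per_year * value_per_daly_usd

# Total burden: direct costs plus lost productivity.
total_burden_usd = direct_healthcare_usd + lost_productivity_usd

print(f"Lost productivity: ${lost_productivity_usd / 1e12:.1f} trillion")
print(f"Total burden: ${total_burden_usd / 1e12:.1f} trillion")
```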

29.

Think by Numbers. Pre-1962 drug development costs and timeline (Think by Numbers).

Think by Numbers: How Many Lives Does FDA Save? https://thinkbynumbers.org/health/how-many-net-lives-does-the-fda-save/ (1962)

Historical estimates (1970-1985): USD $226M fully capitalized (2011 prices). 1980s drugs: $65M after-tax R&D (1990 dollars), $194M compounded to approval (1990 dollars). Modern comparison: $2-3B costs, 7-12 years (dramatic increase from pre-1962). Context: 1962 regulatory clampdown reduced new treatment production by 70%, dramatically increasing development timelines and costs. Note: Secondary source; less reliable than Congressional testimony. Additional sources: https://thinkbynumbers.org/health/how-many-net-lives-does-the-fda-save/ | https://en.wikipedia.org/wiki/Cost_of_drug_development | https://www.statnews.com/2018/10/01/changing-1962-law-slash-drug-prices/

30.

Biotechnology Innovation Organization (BIO). BIO clinical development success rates 2011-2020.

Biotechnology Innovation Organization (BIO) https://go.bio.org/rs/490-EHZ-999/images/ClinicalDevelopmentSuccessRates2011_2020.pdf (2021)

Phase I duration: 2.3 years average. Total time to market (Phase I-III + approval): 10.5 years average. Phase transition success rates: Phase I→II: 63.2%, Phase II→III: 30.7%, Phase III→Approval: 58.1%. Overall probability of approval from Phase I: 12%. Note: Largest publicly available study of clinical trial success rates. Efficacy lag = 10.5 - 2.3 = 8.2 years post-safety verification. Additional sources: https://go.bio.org/rs/490-EHZ-999/images/ClinicalDevelopmentSuccessRates2011_2020.pdf

31.

Nature Medicine. Drug repurposing rate (30%).

Nature Medicine https://www.nature.com/articles/s41591-024-03233-x (2024)

Approximately 30% of drugs gain at least one new indication after initial approval. Additional sources: https://www.nature.com/articles/s41591-024-03233-x

32.

EPI. Education investment economic multiplier (2.1).

EPI: Public Investments Outside Core Infrastructure https://www.epi.org/publication/bp348-public-investments-outside-core-infrastructure/

Early childhood education: Benefits 12X outlays by 2050; $8.70 per dollar over lifetime. Educational facilities: $1 spent → $1.50 economic returns. Energy efficiency comparison: 2-to-1 benefit-to-cost ratio (McKinsey). Private return to schooling: 9% per additional year (World Bank meta-analysis). Note: 2.1 multiplier aligns with benefit-to-cost ratios for educational infrastructure/energy efficiency. Early childhood education shows much higher returns (12X by 2050). Additional sources: https://www.epi.org/publication/bp348-public-investments-outside-core-infrastructure/ | https://documents1.worldbank.org/curated/en/442521523465644318/pdf/WPS8402.pdf | https://freopp.org/whitepapers/establishing-a-practical-return-on-investment-framework-for-education-and-skills-development-to-expand-economic-opportunity/

33.

PMC. Healthcare investment economic multiplier (1.8).

PMC: California Universal Health Care https://pmc.ncbi.nlm.nih.gov/articles/PMC5954824/ (2022)

Healthcare fiscal multiplier: 4.3 (95% CI: 2.5-6.1) during pre-recession period (1995-2007). Overall government spending multiplier: 1.61 (95% CI: 1.37-1.86). Why healthcare has high multipliers: No effect on trade deficits (spending stays domestic); improves productivity & competitiveness; enhances long-run potential output. Gender-sensitive fiscal spending (health & care economy) produces substantial positive growth impacts. Note: "1.8" appears to be a conservative estimate; research shows healthcare multipliers of 4.3. Additional sources: https://pmc.ncbi.nlm.nih.gov/articles/PMC5954824/ | https://cepr.org/voxeu/columns/government-investment-and-fiscal-stimulus | https://ncbi.nlm.nih.gov/pmc/articles/PMC3849102/ | https://set.odi.org/wp-content/uploads/2022/01/Fiscal-multipliers-review.pdf

34.

World Bank. Infrastructure investment economic multiplier (1.6).

World Bank: Infrastructure Investment as Stimulus https://blogs.worldbank.org/en/ppps/effectiveness-infrastructure-investment-fiscal-stimulus-what-weve-learned (2022)

Infrastructure fiscal multiplier: 1.6 during contractionary phase of economic cycle. Average across all economic states: 1.5 (meaning $1 of public investment → $1.50 of economic activity). Time horizon: 0.8 within 1 year, 1.5 within 2-5 years. Range of estimates: 1.5-2.0 (following 2008 financial crisis & American Recovery Act). Italian public construction: 1.5-1.9 multiplier. US ARRA: 0.4-2.2 range (differential impacts by program type). Economic Policy Institute: Uses 1.6 for infrastructure spending (middle range of estimates). Note: Public investment is less likely to crowd out private activity during recessions; particularly effective when monetary policy is loose with near-zero rates. Additional sources: https://blogs.worldbank.org/en/ppps/effectiveness-infrastructure-investment-fiscal-stimulus-what-weve-learned | https://www.gihub.org/infrastructure-monitor/insights/fiscal-multiplier-effect-of-infrastructure-investment/ | https://cepr.org/voxeu/columns/government-investment-and-fiscal-stimulus | https://www.richmondfed.org/publications/research/economic_brief/2022/eb_22-04

35.

Mercatus. Military spending economic multiplier (0.6).

Mercatus: Defense Spending and Economy https://www.mercatus.org/research/research-papers/defense-spending-and-economy

Ramey (2011): 0.6 short-run multiplier. Barro (1981): 0.6 multiplier for WWII spending (war spending crowded out 40¢ private economic activity per federal dollar). Barro & Redlick (2011): 0.4 within current year, 0.6 over two years; increased govt spending reduces private-sector GDP portions. General finding: $1 increase in deficit-financed federal military spending = less than $1 increase in GDP. Variation by context: Central/Eastern European NATO: 0.6 on impact, 1.5-1.6 in years 2-3, gradual fall to zero. Ramey & Zubairy (2018): Cumulative 1% GDP increase in military expenditure raises GDP by 0.7%. Additional sources: https://www.mercatus.org/research/research-papers/defense-spending-and-economy | https://cepr.org/voxeu/columns/world-war-ii-america-spending-deficits-multipliers-and-sacrifice | https://www.rand.org/content/dam/rand/pubs/research_reports/RRA700/RRA739-2/RAND_RRA739-2.pdf

36.

FDA. FDA-approved prescription drug products (20,000+).

FDA https://www.fda.gov/media/143704/download

There are over 20,000 prescription drug products approved for marketing. Additional sources: https://www.fda.gov/media/143704/download

38.

ACLED. Active combat deaths annually.

ACLED: Global Conflict Surged 2024 https://acleddata.com/2024/12/12/data-shows-global-conflict-surged-in-2024-the-washington-post/ (2024)

2024: 233,597 deaths (30% increase from 179,099 in 2023). Deadliest conflicts: Ukraine (67,000), Palestine (35,000). Nearly 200,000 acts of violence (25% higher than 2023, double from 5 years ago). One in six people globally live in conflict-affected areas. Additional sources: https://acleddata.com/2024/12/12/data-shows-global-conflict-surged-in-2024-the-washington-post/ | https://acleddata.com/media-citation/data-shows-global-conflict-surged-2024-washington-post | https://acleddata.com/conflict-index/index-january-2024/

39.

UCDP. State violence deaths annually.

UCDP: Uppsala Conflict Data Program https://ucdp.uu.se/

Uppsala Conflict Data Program (UCDP): Tracks one-sided violence (organized actors attacking unarmed civilians). UCDP definition: Conflicts causing at least 25 battle-related deaths in a calendar year. 2023 total organized violence: 154,000 deaths; non-state conflicts: 20,900 deaths. UCDP collects data on state-based conflicts, non-state conflicts, and one-sided violence. Specific "2,700 annually" figure for state violence not found in recent UCDP data; actual figures vary annually. Additional sources: https://ucdp.uu.se/ | https://en.wikipedia.org/wiki/Uppsala_Conflict_Data_Program | https://ourworldindata.org/grapher/deaths-in-armed-conflicts-by-region

40.

Our World in Data. Terror attack deaths (8,300 annually).

Our World in Data: Terrorism https://ourworldindata.org/terrorism (2024)

2023: 8,352 deaths (22% increase from 2022, highest since 2017). 2023: 3,350 terrorist incidents (22% decrease), but 56% increase in avg deaths per attack. Global Terrorism Database (GTD): 200,000+ terrorist attacks recorded (2021 version). Maintained by: National Consortium for Study of Terrorism & Responses to Terrorism (START), U. of Maryland. Geographic shift: Epicenter moved from Middle East to Central Sahel (sub-Saharan Africa), now >50% of all deaths. Additional sources: https://ourworldindata.org/terrorism | https://reliefweb.int/report/world/global-terrorism-index-2024 | https://www.start.umd.edu/gtd/ | https://ourworldindata.org/grapher/fatalities-from-terrorism

41.

Institute for Health Metrics and Evaluation (IHME). IHME global burden of disease 2021 (2.88B DALYs, 1.13B YLD).

Institute for Health Metrics and Evaluation (IHME) https://vizhub.healthdata.org/gbd-results/ (2024)

In 2021, global DALYs totaled approximately 2.88 billion, comprising 1.75 billion Years of Life Lost (YLL) and 1.13 billion Years Lived with Disability (YLD). This represents a 13% increase from 2019 (2.55B DALYs), largely attributable to COVID-19 deaths and aging populations. YLD accounts for approximately 39% of total DALYs, reflecting the substantial burden of non-fatal chronic conditions. Additional sources: https://vizhub.healthdata.org/gbd-results/ | https://www.thelancet.com/journals/lancet/article/PIIS0140-6736(24)00757-8/fulltext | https://www.healthdata.org/research-analysis/about-gbd

42.

Costs of War Project, Brown University Watson Institute. Environmental cost of war ($100B annually).

Brown Watson Costs of War: Environmental Cost https://watson.brown.edu/costsofwar/costs/social/environment

War on Terror emissions: 1.2B metric tons GHG (equivalent to 257M cars/year). Military: 5.5% of global GHG emissions (2X aviation + shipping combined). US DoD: World’s single largest institutional oil consumer, 47th largest emitter if it were a nation. Cleanup costs: $500B+ for military contaminated sites. Gaza war environmental damage: $56.4B; landmine clearance: $34.6B expected. Climate finance gap: Rich nations spend 30X more on military than climate finance. Note: Military activities cause massive environmental damage through GHG emissions, toxic contamination, and long-term cleanup costs far exceeding current climate finance commitments. Additional sources: https://watson.brown.edu/costsofwar/costs/social/environment | https://earth.org/environmental-costs-of-wars/ | https://transformdefence.org/transformdefence/stats/

43.

ScienceDaily. Medical research lives saved annually (4.2 million).

ScienceDaily: Physical Activity Prevents 4M Deaths https://www.sciencedaily.com/releases/2020/06/200617194510.htm (2020)

Physical activity: 3.9M early deaths averted annually worldwide (15% lower premature deaths than without). COVID vaccines (2020-2024): 2.533M deaths averted, 14.8M life-years preserved; first year alone: 14.4M deaths prevented. Cardiovascular prevention: 3 interventions could delay 94.3M deaths over 25 years (antihypertensives alone: 39.4M). Pandemic research response: Millions of deaths averted through rapid vaccine/drug development. Additional sources: https://www.sciencedaily.com/releases/2020/06/200617194510.htm | https://pmc.ncbi.nlm.nih.gov/articles/PMC9537923/ | https://www.ahajournals.org/doi/10.1161/CIRCULATIONAHA.118.038160 | https://pmc.ncbi.nlm.nih.gov/articles/PMC9464102/

44.

SIPRI. 36:1 disparity ratio of spending on weapons over cures.

SIPRI: Military Spending https://www.sipri.org/commentary/blog/2016/opportunity-cost-world-military-spending (2016)

Global military spending: $2.7 trillion (2024, SIPRI). Global government medical research: $68 billion (2024). Actual ratio: 39.7:1 in favor of weapons over medical research. Military R&D alone: $85B (2004 data, 10% of global R&D). Military spending increases crowd out health: 1% ↑ military = 0.62% ↓ health spending. Note: Ratio actually worse than 36:1. Each 1% increase in military spending reduces health spending by 0.62%, with effect more intense in poorer countries (0.962% reduction). Additional sources: https://www.sipri.org/commentary/blog/2016/opportunity-cost-world-military-spending | https://pmc.ncbi.nlm.nih.gov/articles/PMC9174441/ | https://www.congress.gov/crs-product/R45403
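The 39.7:1 ratio in this note is one division. A minimal sketch using only the two spending totals quoted above (variable names are illustrative):

```python
# Annual spending totals quoted in the note above (2024).
military_spending_usd = 2.7e12          # global military spending
medical_research_usd = 68e9             # global government medical research

# Dollars spent on the military per dollar of government medical research.
disparity_ratio = military_spending_usd / medical_research_usd

print(f"Weapons-to-cures spending ratio: {disparity_ratio:.1f}:1")
```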

45.

Think by Numbers. Lost human capital due to war ($270B annually).

Think by Numbers https://thinkbynumbers.org/military/war/the-economic-case-for-peace-a-comprehensive-financial-analysis/ (2021)

Lost human capital from war: $300B annually (economic impact of losing skilled/productive individuals to conflict). Broader conflict/violence cost: $14T/year globally. 1.4M violent deaths/year; conflict holds back economic development, causes instability, widens inequality, erodes human capital. 2002: 48.4M DALYs lost from 1.6M violence deaths = $151B economic value (2000 USD). Economic toll includes: commodity prices, inflation, supply chain disruption, declining output, lost human capital. Additional sources: https://thinkbynumbers.org/military/war/the-economic-case-for-peace-a-comprehensive-financial-analysis/ | https://www.weforum.org/stories/2021/02/war-violence-costs-each-human-5-a-day/ | https://pubmed.ncbi.nlm.nih.gov/19115548/

46.

PubMed. Psychological impact of war cost ($100B annually).

PubMed: Economic Burden of PTSD https://pubmed.ncbi.nlm.nih.gov/35485933/

PTSD economic burden (2018 U.S.): $232.2B total ($189.5B civilian, $42.7B military). Civilian costs driven by: Direct healthcare ($66B), unemployment ($42.7B). Military costs driven by: Disability ($17.8B), direct healthcare ($10.1B). Exceeds costs of other mental health conditions (anxiety, depression). War-exposed populations: 2-3X higher rates of anxiety, depression, PTSD; women and children most vulnerable. Note: Actual burden $232B, significantly higher than "$100B" claimed. Additional sources: https://pubmed.ncbi.nlm.nih.gov/35485933/ | https://news.va.gov/103611/study-national-economic-burden-of-ptsd-staggering/ | https://pmc.ncbi.nlm.nih.gov/articles/PMC9957523/

47.

CGDev. UNHCR average refugee support cost.

CGDev https://www.cgdev.org/blog/costs-hosting-refugees-oecd-countries-and-why-uk-outlier (2024)

The average cost of supporting a refugee is $1,384 per year. This represents total host country costs (housing, healthcare, education, security). OECD countries average $6,100 per refugee (mean 2022-2023), with developing countries spending $700-1,000. Global weighted average of $1,384 is reasonable given that 75-85% of refugees are in low/middle-income countries. Additional sources: https://www.cgdev.org/blog/costs-hosting-refugees-oecd-countries-and-why-uk-outlier | https://www.unhcr.org/sites/default/files/2024-11/UNHCR-WB-global-cost-of-refugee-inclusion-in-host-country-health-systems.pdf

48.

World Bank. World Bank trade disruption cost from conflict.

World Bank https://www.worldbank.org/en/topic/trade/publication/trading-away-from-conflict

Estimated $616B annual cost from conflict-related trade disruption. World Bank research shows civil war costs an average developing country 30 years of GDP growth, with 20 years needed for trade to return to pre-war levels. Trade disputes analysis shows tariff escalation could reduce global exports by up to $674 billion. Additional sources: https://www.worldbank.org/en/topic/trade/publication/trading-away-from-conflict | https://www.nber.org/papers/w11565 | http://blogs.worldbank.org/en/trade/impacts-global-trade-and-income-current-trade-disputes

49.

VA. Veteran healthcare cost projections.

VA https://department.va.gov/wp-content/uploads/2025/06/2026-Budget-in-Brief.pdf (2026)

VA budget: $441.3B requested for FY 2026 (10% increase). Disability compensation: $165.6B in FY 2024 for 6.7M veterans. PACT Act projected to increase spending by $300B between 2022-2031. Costs under Toxic Exposures Fund: $20B (2024), $30.4B (2025), $52.6B (2026). Additional sources: https://department.va.gov/wp-content/uploads/2025/06/2026-Budget-in-Brief.pdf | https://www.cbo.gov/publication/45615 | https://www.legion.org/information-center/news/veterans-healthcare/2025/june/va-budget-tops-400-billion-for-2025-from-higher-spending-on-mandated-benefits-medical-care

52.

Cybersecurity Ventures. Cybercrime economy projected to reach $10.5 trillion.

Cybersecurity Ventures: $10.5T Cybercrime https://cybersecurityventures.com/hackerpocalypse-cybercrime-report-2016/ (2016)

Global cybercrime costs: $3T (2015) → $6T (2021) → $10.5T (2025 projected). 15% annual growth rate. If measured as a country, would be 3rd largest economy after US and China. Greatest transfer of economic wealth in history. Note: More profitable than global trade of all major illegal drugs combined. Includes data theft, productivity loss, IP theft, fraud. Additional sources: https://cybersecurityventures.com/hackerpocalypse-cybercrime-report-2016/ | https://www.boisestate.edu/cybersecurity/2022/06/16/cybercrime-to-cost-the-world-10-5-trillion-annually-by-2025/

54.

Bolt, J. & Zanden, J. L. van.

Maddison project database 2020. (2020)

Historical GDP per capita estimates from year 1 to present. Global GDP per capita in 1900: approximately 1,260 in 1990 international dollars (roughly 3,150 in 2024 USD after PPP and inflation adjustment). Standard reference for long-run comparative economic history.

55.

Applied Clinical Trials. Global government spending on interventional clinical trials: $3-6 billion/year.

Applied Clinical Trials https://www.appliedclinicaltrialsonline.com/view/sizing-clinical-research-market Estimated range based on NIH ($0.8-5.6B), NIHR ($1.6B total budget), and EU funding ($1.3B/year). Roughly 5-10% of global market. Additional sources: https://www.appliedclinicaltrialsonline.com/view/sizing-clinical-research-market | https://www.thelancet.com/journals/langlo/article/PIIS2214-109X(20)30357-0/fulltext


59.

United Nations Department of Economic and Social Affairs, Population Division.

World population prospects 2024: Summary of results. (2024)

The 2024 Revision of the World Population Prospects provides population estimates and projections for 237 countries or areas. Global median age approximately 30.5 years in 2024, reflecting population-weighted average across all regions.

62.

Estimated from major foundation budgets and activities. Nonprofit clinical trial funding estimate.

Nonprofit foundations spend an estimated $2-5 billion annually on clinical trials globally, representing approximately 2-5% of total clinical trial spending.

63.

ICAN. Global nuclear weapon maintenance cost: $100 billion/year.

ICAN: Global Spending $100B 2024 https://www.icanw.org/global_spending_on_nuclear_weapons_topped_100_billion_in_2024 (2024)

2024: >$100 billion ($190,151/minute), an 11% increase ($9.9B) from 2023. Nine nuclear-armed states: China, France, India, Israel, N. Korea, Pakistan, Russia, UK, US. US: $56.8B (more than all other 8 states combined); China: $12.5B; UK: $10B (+26% YoY, biggest increase). Historical trend: $72.9B (2019) → $82.4B (2021) → >$100B (2024). Private sector contracts: $463B ongoing; $42.5B earned from contracts in 2024 alone. Note: $100B/year figure accurate for 2024. Rapid growth from $73B (2019). US spends more than the rest of the world combined on nuclear weapons. Additional sources: https://www.icanw.org/global_spending_on_nuclear_weapons_topped_100_billion_in_2024 | https://www.icanw.org/the_cost_of_nuclear_weapons


64.

Industry reports: IQVIA. Global pharmaceutical R&D spending.

Total global pharmaceutical R&D spending is approximately $300 billion annually. Clinical trials represent 15-20% of this total ($45-60B), with the remainder going to drug discovery, preclinical research, regulatory affairs, and manufacturing development.

65.

UN. Global population reaches 8 billion.

UN: World Population 8 Billion Nov 15 2022 https://www.un.org/en/desa/world-population-reach-8-billion-15-november-2022 (2022)

Milestone: November 15, 2022 (UN World Population Prospects 2022). "Day of Eight Billion" designated by UN. Added 1 billion people in just 11 years (2011-2022). Growth rate: Slowest since 1950; fell under 1% in 2020. Future: 15 years to reach 9B (2037); projected peak 10.4B in 2080s. Projections: 8.5B (2030), 9.7B (2050), 10.4B (2080-2100 plateau). Note: Milestone reached Nov 2022. Population growth slowing; will take longer to add next billion (15 years vs 11 years). Additional sources: https://www.un.org/en/desa/world-population-reach-8-billion-15-november-2022 | https://www.un.org/en/dayof8billion | https://en.wikipedia.org/wiki/Day_of_Eight_Billion


66.

Harvard Kennedy School. 3.5% participation tipping point.

Harvard Kennedy School https://www.hks.harvard.edu/centers/carr/publications/35-rule-how-small-minority-can-change-world (2020)

The research found that nonviolent campaigns were twice as likely to succeed as violent ones, and once 3.5% of the population were involved, they were always successful. Erica Chenoweth and Maria Stephan studied the success rates of civil resistance efforts from 1900 to 2006, finding that nonviolent movements attracted, on average, four times as many participants as violent movements and were more likely to succeed. Key finding: Every campaign that mobilized at least 3.5% of the population in sustained protest was successful (in their 1900-2006 dataset). Note: The 3.5% figure is a descriptive statistic from historical analysis, not a guaranteed threshold. One exception (Bahrain 2011-2014 with 6%+ participation) has been identified. The rule applies to regime change, not policy change in democracies. Additional sources: https://www.hks.harvard.edu/centers/carr/publications/35-rule-how-small-minority-can-change-world | https://www.hks.harvard.edu/sites/default/files/2024-05/Erica%20Chenoweth_2020-005.pdf | https://www.bbc.com/future/article/20190513-it-only-takes-35-of-people-to-change-the-world | https://en.wikipedia.org/wiki/3.5%25_rule


67.

International IDEA.

International IDEA voter turnout database world export. (2026)

Best current register-based estimate of global registered voters. Sum of the latest available country-level Registration counts in International IDEA’s world export on 2026-04-22 = 4,128,142,495 registered voters across 199 countries and political entities. Methodology notes that Registration is the number of names on the voters’ register as reported by electoral management bodies, and comparability is imperfect because voter rolls and registration systems differ across countries. Additional sources: https://www.idea.int/data-tools/data/voter-turnout-database | https://www.idea.int/data-tools/export?type=region_only&themeId=293&world=all&loc=home


69.

Federation of American Scientists. World nuclear forces.

Federation of American Scientists https://fas.org/issues/nuclear-weapons/status-world-nuclear-forces/ (2024)

As of early 2025, we estimate that the world’s nine nuclear-armed states possess a combined total of approximately 12,241 nuclear warheads. Additional sources: https://fas.org/issues/nuclear-weapons/status-world-nuclear-forces/


70.

NHGRI. Human genome project and CRISPR discovery.

NHGRI https://www.genome.gov/11006929/2003-release-international-consortium-completes-hgp (2003)

Your DNA is 3 billion base pairs. Read the entire code (Human Genome Project, completed 2003). Learned to edit it (CRISPR, discovered 2012). Additional sources: https://www.genome.gov/11006929/2003-release-international-consortium-completes-hgp | https://www.nobelprize.org/prizes/chemistry/2020/press-release/


71.

PMC. Only 12% of human interactome targeted.

PMC https://pmc.ncbi.nlm.nih.gov/articles/PMC10749231/ (2023)

Mapping 350,000+ clinical trials showed that only 12% of the human interactome has ever been targeted by drugs. Additional sources: https://pmc.ncbi.nlm.nih.gov/articles/PMC10749231/


72.

WHO. ICD-10 code count (~14,000).

WHO https://icd.who.int/browse10/2019/en (2019)

The ICD-10 classification contains approximately 14,000 codes for diseases, signs and symptoms. Additional sources: https://icd.who.int/browse10/2019/en


73.

Wikipedia. Longevity escape velocity (LEV) - maximum human life extension potential.

Wikipedia: Longevity Escape Velocity https://en.wikipedia.org/wiki/Longevity_escape_velocity Longevity escape velocity: Hypothetical point where medical advances extend life expectancy faster than time passes. Term coined by Aubrey de Grey (biogerontologist) in a 2004 paper; concept from David Gobel (Methuselah Foundation). Current progress: Science adds 3 months to lifespan per year; LEV requires adding >1 year per year. Sinclair (Harvard): "There is no biological upper limit to age"; first person to live to 150 may already be born. De Grey: 50% chance of reaching LEV by mid-to-late 2030s; SENS approach = damage repair rather than slowing damage. Kurzweil (2024): LEV by 2029-2035; AI will simulate biological processes to accelerate solutions. George Church: LEV "in a decade or two" via age-reversal clinical trials. Natural lifespan cap: 120-150 years (Jeanne Calment record: 122); engineering approach could bypass via damage repair. Key mechanisms: Epigenetic reprogramming, senolytic drugs, stem cell therapy, gene therapy, AI-driven drug discovery. Current record: Jeanne Calment (122 years, 164 days), unbroken since 1997. Note: LEV is theoretical but increasingly plausible given demonstrated age reversal in mice (109% lifespan extension) and human cells (30-year epigenetic age reversal). Additional sources: https://en.wikipedia.org/wiki/Longevity_escape_velocity | https://pmc.ncbi.nlm.nih.gov/articles/PMC423155/ | https://www.popularmechanics.com/science/a36712084/can-science-cure-death-longevity/ | https://www.diamandis.com/blog/longevity-escape-velocity


74.

OpenSecrets. Lobbyist statistics for Washington, D.C.

OpenSecrets: Lobbying in US https://en.wikipedia.org/wiki/Lobbying_in_the_United_States Registered lobbyists: Over 12,000 (some estimates); 12,281 registered (2013). Former government employees as lobbyists: 2,200+ former federal employees (1998-2004), including 273 former White House staffers, 250 former Congress members & agency heads. Congressional revolving door: 43% (86 of 198) of lawmakers who left 1998-2004 became lobbyists; currently 59% of those leaving for the private sector work for lobbying/consulting firms/trade groups. Executive branch: 8% were registered lobbyists at some point before/after government service. Additional sources: https://en.wikipedia.org/wiki/Lobbying_in_the_United_States | https://www.opensecrets.org/revolving-door | https://www.citizen.org/article/revolving-congress/ | https://www.propublica.org/article/we-found-a-staggering-281-lobbyists-whove-worked-in-the-trump-administration


75.

MDPI Vaccines. Measles vaccination ROI.

MDPI Vaccines https://www.mdpi.com/2076-393X/12/11/1210 (2024)

Single measles vaccination: 167:1 benefit-cost ratio. MMR (measles-mumps-rubella) vaccination: 14:1 ROI. Historical US elimination efforts (1966-1974): benefit-cost ratio of 10.3:1 with net benefits exceeding USD 1.1 billion (1972 dollars, or USD 8.0 billion in 2023 dollars). 2-dose MMR programs show direct benefit/cost ratio of 14.2 with net savings of $5.3 billion, and 26.0 from societal perspectives with net savings of $11.6 billion. Additional sources: https://www.mdpi.com/2076-393X/12/11/1210 | https://www.tandfonline.com/doi/full/10.1080/14760584.2024.2367451


79.

U.S. Government Accountability Office.

Electronic Health Records: First Year of CMS’s Incentive Programs Shows Opportunities to Improve Processes to Verify Providers Met Requirements.

https://www.gao.gov/products/gao-12-481 (2012).

84.

Calculated from Orphanet Journal of Rare Diseases (2024). Diseases getting first effective treatment each year.

Calculated from Orphanet Journal of Rare Diseases (2024) https://ojrd.biomedcentral.com/articles/10.1186/s13023-024-03398-1 (2024)

Under the current system, approximately 10-15 diseases per year receive their FIRST effective treatment. Calculation: 5% of 7,000 rare diseases (about 350) have an FDA-approved treatment, accumulated over 40 years of the Orphan Drug Act = about 9 rare diseases/year. Adding 5-10 non-rare diseases that get first treatments yields 10-20 total. FDA approves about 50 drugs/year, but many are for diseases that already have treatments (me-too drugs, second-line therapies). Only about 15 represent truly FIRST treatments for previously untreatable conditions.
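
The arithmetic in this estimate can be reproduced in a few lines. The sketch below uses only the figures quoted in the note; the variable names are illustrative, and the unrounded result is shown so it can be compared with the rounded ranges given above.

```python
# All inputs are figures quoted in the note above; names are illustrative.
RARE_DISEASES = 7_000
TREATED_PERCENT = 5                    # share with an FDA-approved treatment
YEARS_OF_ORPHAN_DRUG_ACT = 40
NON_RARE_FIRST_TREATMENTS = (5, 10)    # low and high estimate per year

# Integer arithmetic avoids floating-point noise in the percentage step.
treated_rare_diseases = RARE_DISEASES * TREATED_PERCENT // 100
rare_per_year = treated_rare_diseases / YEARS_OF_ORPHAN_DRUG_ACT

low_total = rare_per_year + NON_RARE_FIRST_TREATMENTS[0]
high_total = rare_per_year + NON_RARE_FIRST_TREATMENTS[1]

print(f"Rare diseases with a treatment: {treated_rare_diseases}")
print(f"Rare-disease first treatments per year: {rare_per_year:.2f}")
print(f"Total first treatments per year: {low_total:.2f} to {high_total:.2f}")
```

Unrounded, the total comes to roughly 14 to 19 first treatments per year, which sits inside the 10-20 range quoted in the note.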

85.

NIH. NIH budget (FY 2025).

NIH https://www.nih.gov/about-nih/organization/budget (2024)

The budget total of $47.7 billion also includes $1.412 billion derived from PHS Evaluation financing... Additional sources: https://www.nih.gov/about-nih/organization/budget | https://officeofbudget.od.nih.gov/


86.

Bentley et al. NIH spending on clinical trials: 3.3%.

Bentley et al. https://pmc.ncbi.nlm.nih.gov/articles/PMC10349341/ (2023)

NIH spent $8.1 billion on clinical trials for approved drugs (2010-2019), representing 3.3% of relevant NIH spending. Additional sources: https://pmc.ncbi.nlm.nih.gov/articles/PMC10349341/ | https://catalyst.harvard.edu/news/article/nih-spent-8-1b-for-phased-clinical-trials-of-drugs-approved-2010-19-10-of-reported-industry-spending/


87.

PMC. Standard medical research ROI ($20k-$100k/QALY).

PMC: Cost-effectiveness Thresholds Used by Study Authors https://pmc.ncbi.nlm.nih.gov/articles/PMC10114019/ (1990)

Typical cost-effectiveness thresholds for medical interventions in rich countries range from $50,000 to $150,000 per QALY. The Institute for Clinical and Economic Review (ICER) uses a $100,000-$150,000/QALY threshold for value-based pricing. Between 1990-2021, authors increasingly cited $100,000 (47% by 2020-21) or $150,000 (24% by 2020-21) per QALY as benchmarks for cost-effectiveness. Additional sources: https://pmc.ncbi.nlm.nih.gov/articles/PMC10114019/ | https://icer.org/our-approach/methods-process/cost-effectiveness-the-qaly-and-the-evlyg/


88.

Xia et al., Nature Food. Nuclear winter famine.

Xia et al. https://www.nature.com/articles/s43016-022-00573-0 (2022)

We estimate that a nuclear war between the United States and Russia would produce 150 Tg of soot and lead to 5 billion people dying at the end of year 2. Additional sources: https://www.nature.com/articles/s43016-022-00573-0


89.

Manhattan Institute. RECOVERY trial 82× cost reduction.

Manhattan Institute: Slow Costly Trials https://manhattan.institute/article/slow-costly-clinical-trials-drag-down-biomedical-breakthroughs RECOVERY trial: $500 per patient ($20M for 48,000 patients = $417/patient). Typical clinical trial: $41,000 median per-patient cost. Cost reduction: 80-82× cheaper ($41,000 ÷ $500 ≈ 82×). Efficiency: $50 per patient per answer (10 therapeutics tested, 4 effective). Dexamethasone estimated to save >630,000 lives. Additional sources: https://manhattan.institute/article/slow-costly-clinical-trials-drag-down-biomedical-breakthroughs | https://pmc.ncbi.nlm.nih.gov/articles/PMC9293394/
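
The cost ratios in this note follow from simple division, sketched below with the quoted figures only. Variable names are illustrative. The note rounds the RECOVERY per-patient cost up to $500 before dividing, so the sketch computes the ratio both with that rounded figure and with the unrounded $20M / 48,000 figure.

```python
# Figures quoted in the note above; names are illustrative.
RECOVERY_TOTAL_COST = 20_000_000      # US dollars
RECOVERY_PATIENTS = 48_000
RECOVERY_ROUNDED_PER_PATIENT = 500    # rounded figure used in the note
TYPICAL_PER_PATIENT = 41_000          # median per-patient cost
THERAPEUTICS_TESTED = 10

unrounded_per_patient = RECOVERY_TOTAL_COST / RECOVERY_PATIENTS
ratio_with_rounded_cost = TYPICAL_PER_PATIENT / RECOVERY_ROUNDED_PER_PATIENT
ratio_with_unrounded_cost = TYPICAL_PER_PATIENT / unrounded_per_patient
cost_per_patient_per_answer = RECOVERY_ROUNDED_PER_PATIENT / THERAPEUTICS_TESTED

print(f"Unrounded RECOVERY cost per patient: ${unrounded_per_patient:,.0f}")
print(f"Cost ratio using $500/patient: {ratio_with_rounded_cost:.0f}x")
print(f"Cost ratio using unrounded cost: {ratio_with_unrounded_cost:.1f}x")
print(f"Cost per patient per answer: ${cost_per_patient_per_answer:.0f}")
```

The 82× headline depends on the rounded $500 figure; with the unrounded per-patient cost the ratio is higher, so the quoted reduction is, if anything, a conservative reading of the same inputs.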


90.

Trials. Patient willingness to participate in clinical trials.