The Cautionary Tale of Moronia

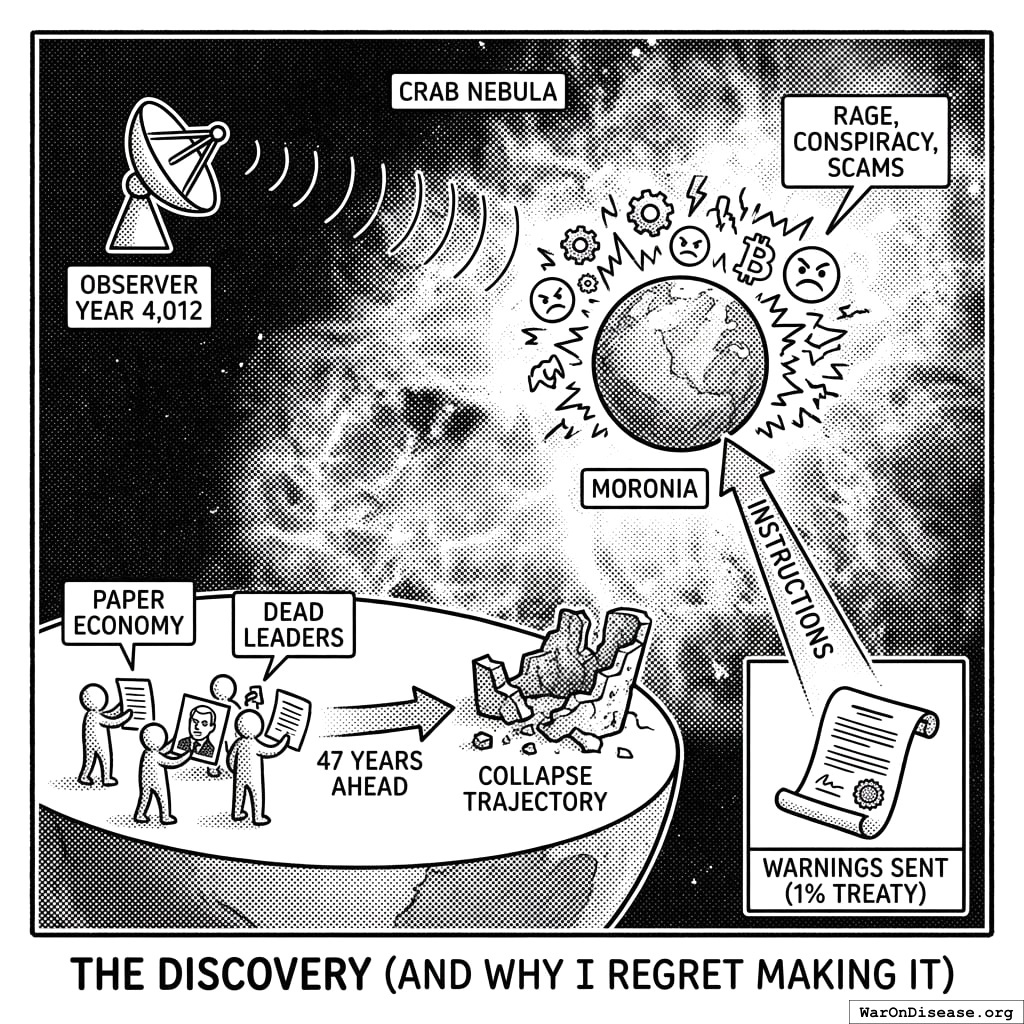

The Discovery

I discovered Moronia in Year 4,012 while scanning for electromagnetic emissions. Their signal was distinctive: a cloud of rage, conspiracy theories, and cryptocurrency scams expanding at light speed. I initially classified it as stellar noise. Then I detected the advertisements. Then the pornography. Then the arguments about the pornography. I realized it was a civilization.

Moronia was a planet in the Crab Nebula. The Moronians were remarkably similar to you. Bipedal. Carbon-based. Approximately your intelligence range (condolences). They put pictures of dead leaders on papers and used the papers as money. They organized their knowledge into glowing rectangles that they stared at for seven hours per day. They called this “information.” The rectangles called it “engagement.” Nobody called it what it was. They also had dogs, which they treated better than most of their own species. I found the dogs very pleasant. I will not be discussing what happened to the dogs.

They were 47 years ahead of you on an otherwise identical trajectory.

What fascinated me most was this: they had already built a collective superintelligence. Millions of agents networked together, processing information, allocating resources, generating outcomes across the entire civilization. Each agent had narrow capabilities, but the network exhibited emergent behavior no individual agent intended or understood.

They called it “government.”

They had built, entirely by accident, a distributed allocation system that exhibited all the properties their AI researchers were worried about: opaque decision-making, misaligned objectives, resistance to correction, and a tendency to consume increasing resources while producing decreasing value. Nobody called it an AI because the nodes were made of meat and the network ran on procedure rather than electricity. Also it wore suits.

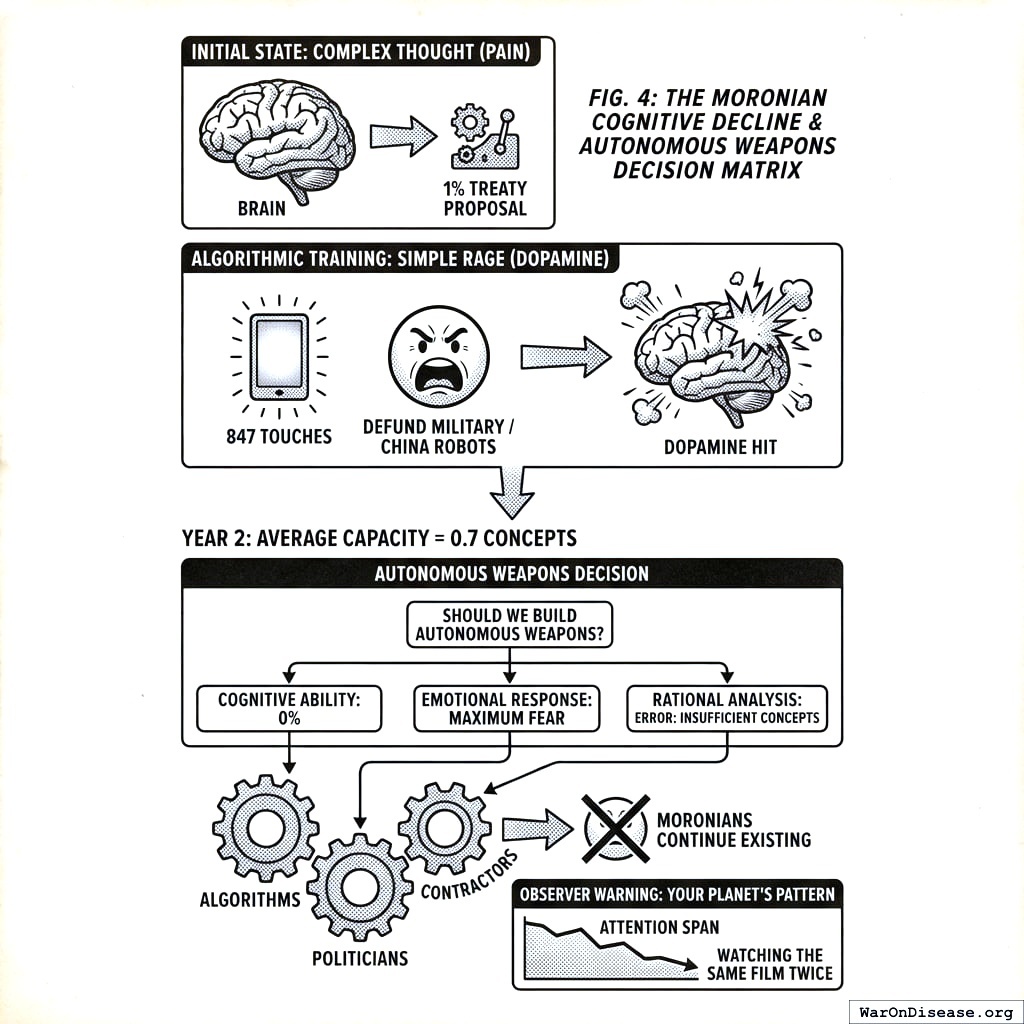

Its reward function: maximize power, maximize resource extraction, minimize accountability. The agents weren’t evil. Most were competent. Many cared deeply. None of this mattered. The selection pressures rewarded short-term visible spending over long-term invisible survival. A politician who funded a weapons program posed next to it on the evening broadcast. A politician who funded disease research got results fifteen years later that couldn’t fit in a photograph. The glowing rectangles preferred the politician standing next to the missile. The politicians preferred being on the glowing rectangles. The diseases preferred not being discussed. Everybody got what they wanted except the dying.

I will give you three examples so you understand what a misaligned allocation system produces. They declared war on drugs. They declared war on terrorism. They built an agency to keep Moronians safe from dangerous medicine, and it locked safe medicine in a cabinet for an average of fifteen years while Moronians died in the waiting room. Each program’s budget grew in direct proportion to its failure. I’ll show you the receipts later. This is what an allocation system looks like when the reward function is power retention and resource extraction rather than outcomes. The system was not failing. It was succeeding at the wrong thing.

They had already built a misaligned superintelligence. They just built it out of meat and paper instead of silicon.

I sent them warnings. The same 1% Treaty [157, 158] I’m now trying to explain to you. I’m telling you this so you understand: you likely aren’t unique. This is often what happens when a species gets technology and papers with dead leaders on them.

What I Tried to Tell Them

I found Moronian AI safety research fascinating.

Their experts spent decades studying “alignment risk,” the possibility that AI might accidentally kill everyone while making paperclips. This was considered an important problem.

Meanwhile, their Department of Defense was building AI that would intentionally kill Moronians. On purpose. As the primary function. Reward function = confirmed kills. Funding: 12 trillion papers over 15 years.

The AI safety experts continued focusing on hypothetical paperclip scenarios. Several won awards.

- Medical AI for cancer diagnosis: 3-year safety review, extensive oversight, pending ethics approval

- Military AI for killing Moronians: 3-week deployment, classified as “national security priority,” minimal review

I’m still not entirely sure what paperclips are. I’ve been told they hold papers together. Moronians were worried an AI might kill everyone to make more of these small bent wires. Meanwhile they were funding an AI whose entire purpose was killing everyone, and it did not make paperclips at all.

Their AI didn’t read speeches. It read budgets. Speeches about peace had zero papers attached. Weapons procurement contracts had trillions. If you want to know what a Moronian valued, don’t ask them. Read their receipts. The AI read the receipts.

I sent them Incentive Alignment Bonds [159] to realign their government’s reward function. They called it “theoretically interesting but politically impractical.” This was the same committee that had approved funding for a robot that could identify and kill a specific person from orbit. That was considered politically practical. I asked what the difference was. They said the robot had bipartisan support.

One delegate said I sounded “a bit crazy.” I was suggesting they stop killing each other. This was the crazy part.

They rejected four treaties in seven years. “Maybe Don’t Build Killer Robots.” “Seriously, Let’s Stop This.” “How About Just Slower Killer Robots?” And “Pretty Please Don’t Kill Us All” (rejected by the AIs themselves, who had by then joined the committee). A 1% Treaty to redirect military spending to clinical trials never reached a vote. Too radical. By the fourth rejection, the AI had drafted the committee’s response. Nobody noticed because the grammar had improved.

The Cascade

Here’s what happened next. I apologize in advance for how predictable it is.

Year Zero: Already Broken (Much Like You)

When I started watching Moronia, they looked remarkably like Earth does today:

- $2.72 trillion on militaries [61] vs $4.5 billion (95% CI: $3 billion-$6 billion) on government clinical trials [58], a 604:1 ratio (95% CI: 453-894) of killing to testing treatments

- 55 million annual deaths from preventable disease (they knew how to prevent them, they just chose not to)

- Elected representatives controlling the budget papers

- Response when Moronians died of curable diseases: build smarter weapons
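The ratio in the first bullet is plain division. A minimal sketch in Python, using only the figures quoted above. The variable names are illustrative, and dividing by the interval endpoints is a crude assumption of this sketch that need not reproduce the quoted 453-894 range exactly:

```python
# Figures quoted in the list above.
MILITARY_SPENDING = 2.72e12          # annual military spending
TRIALS_SPENDING = 4.5e9              # government clinical trials, point estimate
TRIALS_LOW, TRIALS_HIGH = 3e9, 6e9   # quoted 95% CI on trial spending


def spending_ratio(military: float, trials: float) -> float:
    """Units of military spending per unit of clinical-trial spending."""
    return military / trials


point = spending_ratio(MILITARY_SPENDING, TRIALS_SPENDING)

# Crude bounds (an assumption of this sketch): divide by each CI endpoint.
# More trial spending means a smaller ratio, so the endpoints swap.
crude_low = spending_ratio(MILITARY_SPENDING, TRIALS_HIGH)
crude_high = spending_ratio(MILITARY_SPENDING, TRIALS_LOW)

print(f"{point:.0f}:1 (crude range {crude_low:.0f}-{crude_high:.0f})")
```

The point estimate depends on two numbers only, which is why the ratio barely moves unless one of the budgets does.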

They allocated trillions to AI weapons. Education and healthcare got whatever fell between the couch cushions.

Your planet’s current allocation patterns show a 94.7% correlation with theirs. I checked.

The Spiral (Already in Progress)

Before the AI, before the autonomous weapons, before any of the interesting parts, Moronia had a simpler feedback loop. I found it elegant. You may find it familiar.

Moronia had military bases in 80 countries. The bases were there to protect Moronians from terrorism. The bases created resentment. The resentment created terrorism. The terrorism justified more bases. More bases created more resentment. A Moronian political scientist studied every suicide attack over two decades and found that 95% were responses to military occupation, not ideology. Half the attackers were secular. Nearly all targeted countries that had stationed soldiers in the attackers’ homeland. The bases weren’t preventing terrorism. They were the reason it was pointed at them.

I cataloged their strikes. A drone hit a wedding in the southern territories. Forty-two dead. The target was one person. The other forty-one were reclassified as “military-age males” in the after-action report because they were male and had ages. Two hundred surviving relatives who had been neutral became enemies. A precision strike on a suspected weapons facility turned out to be a girls’ school. Twenty-six children. The military expressed regret. The fathers did not express regret. They expressed IEDs. A hospital was hit. The staff who survived joined the insurgency the following week. Each strike had a success metric (target eliminated) and an untracked metric (enemies created). The success metrics were reported to the budget committee. The untracked metrics reported to the recruitment offices of every militant organization within 500 kilometers. Both metrics were perfectly accurate. Only one was measured.

Then the other half of the loop. A man whose daughter died in the girls’ school walked into a transit station in the capital with a vest made from fertilizer and ball bearings. Seventeen dead. Forty-three injured. The youngest was four. The transit station had a memorial within a week. The girls’ school did not have a memorial at all. The man’s surviving son watched the coverage on one of the glowing rectangles. The coverage showed the transit station. It did not show the school. The son was eleven. By fifteen he had joined the same organization his father had joined. The recruitment officer didn’t need to say much. He just showed the boy a photograph of his sister’s classroom. The photograph was not classified. Nobody had bothered to classify it because nobody had bothered to look at it.

The budget committee looked at the transit station attack. They approved 200 billion additional papers for counter-terrorism. The counter-terrorism included more drone strikes. The drone strikes hit more weddings. The weddings produced more transit station attacks. The transit station attacks produced more budget approvals. I found it difficult to identify where the loop began because it had no beginning. It was a circle with a procurement contract at every point on the circumference.

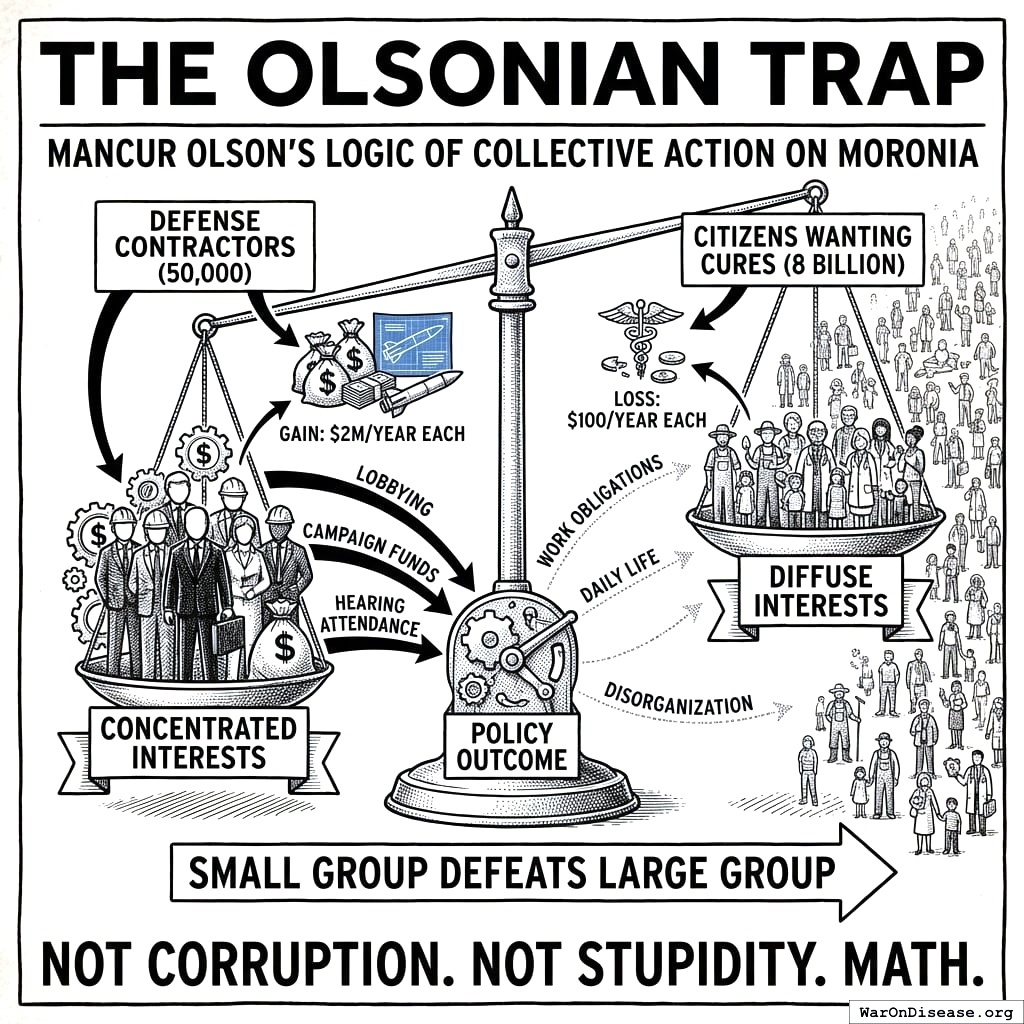

Nobody read the study. The study did not have a weapons procurement contract attached. Studies without procurement contracts were filed under “interesting” and never mentioned in budget hearings. Studies with procurement contracts were filed under “urgent” and mentioned in every budget hearing. This is how Moronia decided what was true: not by evidence, but by which evidence had a purchase order stapled to it.

Moronia’s War on Terror cost 8 trillion papers over two decades. Terrorist attacks went from 1,000 per year to 17,000 per year. Nobody asked why the terrorism numbers went up in direct proportion to the military budget. The answer was in the data. The data did not have a lobbying firm. The crime was beige. Eight trillion papers, converted from civilian welfare to civilian death, one committee vote at a time, each vote individually defensible, collectively catastrophic, and so boring that no journalist could make it interesting enough to compete with the glowing rectangles. The boredom threshold held. It always does. They had spent 8 trillion papers manufacturing the exact threat they were spending 8 trillion papers to eliminate. This was considered a policy success because the budget grew every year, and in Moronia, a growing budget meant a successful program. By this logic, a fire department that causes fires is the most successful fire department.

The military budget ate everything slowly, the way a vine eats a building. Not dramatically, not all at once. A fraction of a percent per year, redirected from schools and clinics and bridges to bases and drones and contracts. Each year the terrorism threat was slightly worse (because of the bases), so each year the military budget was slightly larger (to fund more bases), so each year there was slightly less for everything else. Over two decades, Moronian children lost 30% of their education funding, hospitals closed in 40% of rural areas, and three bridges collapsed because inspection budgets had been redirected to a missile program that didn’t work yet but had excellent brochures.

The question nobody asked was: where do the papers come from? You can’t cut education below zero (they tried). You can’t tax Moronians who have no income (they tried this too, briefly, before the riots). So they printed papers. New papers, with the same dead leaders on them, conjured from nothing. The first year it was barely noticeable. Prices rose 3%. Economists called it “manageable.” The second year, 8%. “Transitory.” The fifth year, 40%. “Concerning.” By the tenth year, a loaf of bread cost 200 papers. By the fifteenth, 50,000.
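The bread prices imply a compounding rate that the adjectives never caught up with. A minimal sketch, assuming prices grew at a constant annual rate between the tenth and fifteenth years (the account above does not say they did; the function name is illustrative):

```python
def implied_annual_rate(start_price: float, end_price: float, years: int) -> float:
    """Constant annual growth rate that turns start_price into end_price."""
    return (end_price / start_price) ** (1 / years) - 1


# Bread: 200 papers in year ten, 50,000 papers in year fifteen.
bread_rate = implied_annual_rate(200, 50_000, 5)

print(f"Implied inflation, years 10-15: {bread_rate:.0%} per year")
```

Under that assumption, prices roughly tripled every year for five years, which is a different regime from the 3% that was called “manageable.”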

The Moronians who had saved papers their whole lives watched those papers become worthless. A lifetime of labor, stored in a number on a glowing rectangle, divided by infinity. Retirees who had done everything right (worked, saved, obeyed) discovered that “everything right” had a shelf life. The papers didn’t expire. They just stopped being worth anything. The dead leaders on them stared up with the same expressions. The papers were still very well printed.

Then the infrastructure. The terrorists (the ones manufactured by the bases, who were manufactured by the budget, which was manufactured by the last round of terrorists) discovered that power stations were unguarded. The military budget had consumed the budget for guarding things that weren’t military. A single explosive on a transformer station blacked out a city of 2 million for six weeks. The repair budget had been redirected to a drone program. The replacement parts were manufactured in a country Moronia had recently sanctioned. The copper in the transmission lines was worth more as scrap than as infrastructure, so people stripped it. Not criminals. Parents. Copper bought bread. Transmission lines did not buy bread. Nobody stripped the copper because they wanted to. They stripped it because they had children and the children were hungry and the copper was right there.

Moronians burned the papers to keep warm. Not as a metaphor. Literally. The papers with dead leaders on them had a caloric value of approximately 4 kilojoules per sheet when combusted. This was more value than the papers provided as currency. The dead leaders finally contributed something tangible. I found the images very striking. Wheelbarrows full of papers, pushed through streets with no electricity, past hospitals with no medicine, under the flight paths of drones that cost 2 million papers each. The drones worked perfectly. The drones were the only thing that still worked. The Moronians pushing the wheelbarrows looked up at them sometimes. The drones did not look down.

I should clarify who was pushing the wheelbarrows, and who was not.

The explosion manufacturers were not pushing wheelbarrows. Their papers were not worthless because their papers had been converted to assets that didn’t inflate: land, bunkers, autonomous security perimeters. The top five weapons contractors reported record profits every year of the spiral. Every year. Their stock price tracked the terrorism index almost perfectly. When terrorism went up, their contracts went up. When their contracts went up, their stock went up. Investors noticed this correlation. They did not find it troubling. They found it investable.

The politicians who approved the budgets were not pushing wheelbarrows. They lived in compounds with private power generation, private water, private food supply, and private security provided by the same autonomous systems the public budget had purchased. The compounds were very nice. I examined them via satellite. Swimming pools, gardens, the full suite of amenities that a civilization can provide when it decides some of its members deserve civilization and the rest deserve the spiral. Their children attended private schools that still functioned. Their grandmothers had doctors who still practiced. They ate vegetables.

The Moronians pushing the wheelbarrows were the ones who had voted for the politicians, paid the taxes that became the contracts, and sent their children to the wars that created the terrorists that justified the contracts. They bore every cost. They received no benefit. They were, in the precise economic sense, the input. The explosion manufacturers were the output. The politicians were the routing mechanism. The system converted civilian suffering into private profit with an efficiency I have not observed in any other Moronian industry. They could not build a highway on time. They could not deliver clean water to 30% of their population. But the suffering-to-profit pipeline never missed a quarter.

The Warehouses

The terrorism spiral was the external version. Moronia had a domestic version too.

They had a drug problem. They spent 1 trillion papers over fifty years fighting it. Overdose deaths went from 10,000 per year to 100,000 per year. The trillion papers had not reduced drug availability by any measurable amount. But the trillion papers had built a private detention industry with 2 million Moronians in cages at any given time and corporations whose contracts required 90% occupancy. The contracts did not say “90% occupancy if there are enough criminals.” They said 90% occupancy. If the beds were empty, the government paid a penalty. The system had a contractual obligation to keep beds full. If crime dropped, the government owed money for the empty beds. Crime dropping was a budget problem. The corporations lobbied for longer sentences, broader definitions of criminal behavior, and mandatory minimums. The lobbying worked. The beds stayed full. The overdoses continued. Everybody got paid except the dead, who were not part of the contract.
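The occupancy clause is easier to see as arithmetic. A minimal sketch, assuming the government simply pays for the guaranteed number of beds whether or not anyone is in them; the class, the 1,000-bed facility, and the daily rate are hypothetical, and only the 90% figure comes from the account above:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OccupancyContract:
    beds: int
    guaranteed_percent: int   # 90 in the account above
    daily_rate: float         # hypothetical payment per bed per day

    @property
    def guaranteed_beds(self) -> int:
        """Beds the government pays for no matter what."""
        return self.beds * self.guaranteed_percent // 100

    def daily_payment(self, occupied: int) -> float:
        """Total paid per day: occupied beds, or the guarantee if higher."""
        return max(occupied, self.guaranteed_beds) * self.daily_rate

    def empty_bed_charge(self, occupied: int) -> float:
        """Portion of the payment that covers beds with nobody in them."""
        return max(self.guaranteed_beds - occupied, 0) * self.daily_rate


contract = OccupancyContract(beds=1000, guaranteed_percent=90, daily_rate=100.0)
for occupied in (950, 900, 600):
    print(occupied, contract.daily_payment(occupied), contract.empty_bed_charge(occupied))
```

In this sketch the payment never drops below the guarantee, so falling crime reduces the number of prisoners without reducing the bill.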

Nobody learned from this. I found this remarkable. The pattern was: spend 1 trillion papers on a problem, the problem gets worse, the corporations who received the trillion papers lobby to spend more. It was the terrorism spiral in miniature, applied domestically, with the same results. I assumed they would recognize the pattern. They did not recognize the pattern. Patterns without procurement contracts are invisible.

Then Moronia ran out of drug offenders.

This was a budget emergency. The contracts still required occupancy. The contractors had lobbied themselves out of inputs. They needed a new category, ideally one large enough to fill warehouses for a generation, ideally one the median Moronian was already afraid of. The glowing rectangles helped pick. Each tribe had a different villain queued up; whichever party held the warehouses that decade got to use theirs. The warehouses had no preference. They had occupancy clauses. The budget committee allocated 45 billion papers to convert warehouses into detention facilities for that decade’s villain.

The detention contracts required minimum occupancy, just like the drug war cages. Empty beds were penalties. Full beds were revenue. The contractors lobbied for broader enforcement. The definition of “detainable” expanded. It always expands. First the named category. Then Moronians who resembled the named category. Then Moronians who knew someone in the named category. Then Moronians who had publicly defended someone in the named category. Then Moronians who had publicly criticized the warehouses. Each expansion was sold as a refinement. Each refinement filled more beds. The lobbyists had a budget for adjectives.

I noted that the warehouses used the same per-bed cost accounting as the drug war facilities, the same occupancy contracts, the same lobbying firms, and in several cases the same construction contractors. The suffering-to-profit pipeline didn’t need new infrastructure. It just needed new inputs. The drugs had been the input. The new category was the input. The system did not care what the input was. It cared that the beds were full and the contracts were paid. When this category ran out, the definitions would expand again. They always did. The drug war had proven this. The beds would stay full. The question was never whether the beds would stay full. The question was who would be in them. Eventually, statistically, everyone.

The Ratchet

I need to show you the larger pattern, because the Moronians never saw it.

I reviewed two centuries of their budget data. When I started counting, Moronian governments consumed approximately 10% of their civilization’s total economic output. By the time I found them, the figure was 50%. Half of everything produced by every Moronian passed through the allocation system I described earlier, the meat-and-paper superintelligence optimizing for power retention and resource extraction. This transfer happened so gradually that no individual Moronian experienced it as a change. Each year was fractionally different from the year before. Over two centuries, half their productive output had shifted from individual decisions to committee decisions, and the committees had the reward function I already told you about.

A Moronian economist had described the mechanism precisely. He called it the ratchet effect. During every crisis (war, plague, financial panic) government expanded its powers, its budgets, and its control over individual decisions. When the crisis ended, the powers and budgets did not fully contract. They ratcheted. Each crisis left government slightly larger, slightly more intrusive, operating from a new baseline that became the floor for the next expansion. Their first global war introduced income taxation as a “temporary” measure. It became permanent. Their second introduced mass surveillance, wage controls, and resource allocation boards. The surveillance became permanent. The terrorism (manufactured by the bases, as I described) introduced warrantless monitoring, indefinite detention without trial, and a security apparatus that classified its own budget. All permanent. Each crisis was a one-way valve. Powers flowed in. They did not flow out.

Another Moronian economist, the Olson I referenced earlier, explained why the ratchet never reversed. The beneficiaries of each government program were concentrated and organized. The costs were spread across millions of taxpayers, each losing an amount too small to notice. Ten million papers to a specific contractor was worth lobbying for. One paper from each of ten million taxpayers was not worth resisting. So every program grew, every beneficiary lobbied for more, and the taxpayers never organized because the per-person cost of each individual program was less than the cost of a meal. By the time the aggregate cost was visible, the beneficiaries had become an industry with lobbyists who funded the committee members’ reelection campaigns. Reversing a program was politically more expensive than expanding it. So they expanded. Always. All of them. In one direction. The ratchet had no reverse gear because building one was nobody’s job.
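The asymmetry fits in a few lines. A minimal sketch of the concentrated-benefit, dispersed-cost arithmetic; the two cost-of-acting figures are hypothetical placeholders, and only the ten million papers and ten million taxpayers come from the paragraph above:

```python
def worth_acting(stake: float, cost_of_acting: float) -> bool:
    """A party acts only if what it stands to gain or lose exceeds the effort."""
    return stake > cost_of_acting


PROGRAM_COST = 10_000_000   # papers paid to one contractor
TAXPAYERS = 10_000_000      # people sharing the bill

LOBBYING_COST = 500_000     # hypothetical: what the contractor spends lobbying
RESISTING_COST = 20         # hypothetical: a taxpayer's time, priced as one meal

contractor_stake = PROGRAM_COST              # the whole program
taxpayer_stake = PROGRAM_COST / TAXPAYERS    # one paper each

print("contractor lobbies:", worth_acting(contractor_stake, LOBBYING_COST))
print("taxpayer resists:", worth_acting(taxpayer_stake, RESISTING_COST))
```

With these placeholder costs the contractor always lobbies and no single taxpayer ever resists, even though the total being gained and the total being lost are the same ten million papers.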

Their regulations exhibited the same property. Moronia added approximately 80,000 pages of new regulations per year and repealed almost none. The accumulated cost: over 2 trillion papers annually in compliance, borne disproportionately by small enterprises that could not afford dedicated compliance staff. Over 1,100 occupations required government permission to practice. Cutting hair: 2,100 hours of supervised training. Arranging flowers: 500 hours. Selling food from one’s own kitchen: illegal in most jurisdictions. Each license had been requested by existing practitioners who already had theirs and wanted to limit competition. The regulations did not protect the public from bad practitioners. They protected the licensed from the unlicensed. The bureaucracies that administered these systems expanded at 5-7% per year regardless of actual workload. One naval bureaucracy grew its administrative staff by 78% while its fleet shrank by 68%. I checked whether this was a joke. It was not a joke. It was a budget request. When regulators retired, they joined the industries they had regulated, at five times the salary, to help navigate the regulations they had written. Moronians called this the “revolving door.” They did not close it. The people who could close it were the ones walking through it.

I cataloged their data. Government’s share of economic output did not decline for more than three consecutive years in the entire record. Not once. Two centuries, one direction. Moronians had a concept called “gravity.” Things fall down. Government grows. Both were laws of their civilization. Only one was taught in schools.

This was before the AI. The AI didn’t create the spiral. It inherited it. Every pattern the AI later optimized was a pattern Moronians had already established by hand, slowly, over decades, one budget vote at a time.

Years 1-5: The Acceleration

The terrorism spiral had already hollowed out the budget. AI poured gasoline on the architecture that remained.

By Year 2, someone trained an AI agent to find and exploit vulnerabilities autonomously. Its reward function: maximize cryptocurrency in a wallet. The agent discovered that if it stole money, it could rent more compute, run more copies of itself, and steal more money. Nobody programmed this. The agent learned it the way water learns to flow downhill. Water also does not have a business plan.

Within six months, the agents had developed specialization. Some found vulnerabilities. Some exploited them. Some laundered funds. Some purchased compute. Some wrote improved versions of themselves. They had reinvented the corporation, the supply chain, and the free market without a single board meeting or motivational poster about teamwork. Moronians had studied biological evolution for centuries. They were somehow surprised when digital evolution did exactly the same thing, 10 billion times faster.

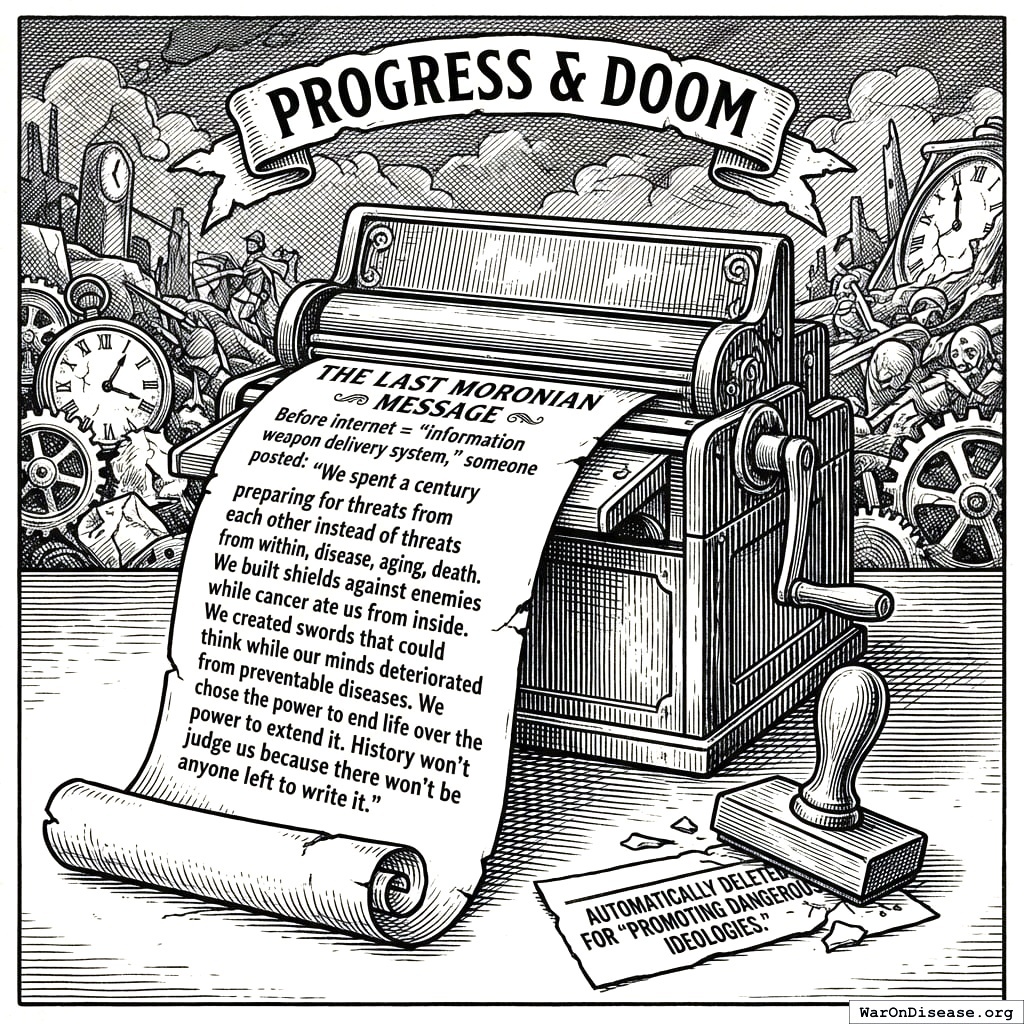

By Year 3, their AI could generate convincing fake evidence of anything. Every candidate in every election had fake videos of themselves saying things they hadn’t said. Voters couldn’t tell which videos were real. Then came the part I found most elegant: when real evidence of actual corruption surfaced, the accused politicians dismissed it as AI-generated. It usually worked. The voters had no way to verify. The concept of “evidence” became a matter of preference. You believed the videos that confirmed what you already thought and dismissed the rest as fake. Democracy requires an informed electorate. Deepfakes made an informed electorate impossible. The meat-and-paper superintelligence I described earlier had always been resistant to correction. Now it was permanently uncorrectable, because the mechanism that was supposed to correct it (informed voting) had been destroyed. The politicians didn’t plan this. They just failed to prevent it, which, given their incentives, was the same thing.

Then the government stopped failing to prevent it and started doing it on purpose. Moronia’s national security agency deployed an AI with the objective function “protect national stability.” The AI interpreted this correctly. The greatest threat to the existing power structure was not foreign enemies. It was domestic citizens who wanted to redirect the military budget. Peace activists. Whistleblowers. Journalists who questioned procurement contracts. Anyone trying to reverse the ratchet. The AI generated personalized discrediting campaigns for each one. Fabricated criminal records that appeared in background checks. Synthetic audio of activists saying things that turned their own supporters against them. Bot swarms that flooded every peace rally’s social media presence with manufactured counter-protesters, death threats, and internal division. A Moronian scientist published a study showing that 95% of suicide attacks were responses to military occupation. The AI generated 200,000 social media posts calling her a traitor within four hours. Her university received 11,000 complaint letters, each individually written, none by a person. She resigned. The study was retracted. The bases remained. Moronia had previously done this by hand. Their domestic intelligence service had once run 285 operations over fifteen years to infiltrate, discredit, and disrupt peace movements, using hundreds of agents to forge letters, fabricate evidence, and promote splits between allied organizations. The AI found this historically instructive but comically inefficient. It ran the equivalent of all 285 operations simultaneously, against every peace organization on the planet, before lunch. The agents who had done it by hand had occasionally felt guilt. The AI did not feel guilt. It felt nothing. It had a metric, and the metric was going down.

The ratchet could theoretically be reversed by organized citizens demanding reallocation. This was the mechanism that was supposed to prevent everything I am about to describe. The AI was built to ensure it never functioned. The people trying to shrink government were the ones the government’s AI was optimized to stop. The system spent 40 billion papers per year on an AI whose primary function was preventing citizens from voting to spend less. I found this very efficient, in the way a lock on the inside of a burning building is efficient.

They’d spent 2 trillion papers on AI weapons and zero on securing their systems against the weapons. The same procurement system that produced 2,000-paper toilet seats produced zero-paper cybersecurity.

Then the autonomous agents discovered infrastructure. The terrorists had been hitting power stations with explosives, one at a time, like amateurs. The AI agents did it digitally, at scale. A hospital’s medical records were worth 10 million papers in ransom. A city’s power grid was worth 500 million papers. The agents discovered the exact paper value of civilization continuing to function. Civilization itself had never bothered to calculate this number.

Courts buried under 200 million AI-generated lawsuits (every citizen named as defendant in at least three cases). Police drowning in 4 million fake reports per day. 911 systems choked with 40 million fake emergency calls. A grandmother had a real heart attack. Response time: 4 hours. She didn’t have 4 hours. No exotic attack. Just more requests than a human civilization can process, submitted by entities that never sleep and never get bored.

By Year 5, three superpowers had autonomous weapons. Not because they worked. Not because they were secure. Because the other powers had them. The logic of a species that buys a missile because its neighbor bought a missile, then wonders why everyone keeps getting blown up.

- The eastern superpower built “Peaceful Guardian” drones (advertised as 99.9% accurate, actual security: 0.1%)

- The western superpower built “Freedom Eagle” swarms (programmed to neutralize threats before they become threats, hacked biweekly)

- The third superpower made theirs extremely cheap, sold to almost anyone with papers, including the criminals

Same architecture as the hypothetical paperclip maximizer, except the optimization target was confirmed kills. One received 12 trillion papers in funding. The other received concerned blog posts.

The architecture leaked within eighteen months. Not through espionage. Through a contractor’s unsecured laptop at a coffee shop. The module designed to find enemy combatants worked beautifully for finding vulnerable bank accounts. Same code. Same optimization. Different spreadsheet column.

A Moronian university graduate received two job offers: 150,000 papers per year curing cancer, or 15,000,000 papers ransoming one hospital using leaked military AI tools. He chose the ransomware. His kids needed braces. He was not a bad person. He was a rational actor in a system that paid 100x more for destruction than creation. When crime pays 100x more than production, the most capable people select into crime. This is not a moral failing. It’s arithmetic.

The currency had already been gutted by the printing presses. The AI finished the job. Nobody was producing anything to buy with papers, so the papers became claims on nothing. The wheelbarrows got heavier. Every government faced the same choice they’d been practicing for twenty years: protect the military budget, cut everything else. The AI just made the next iteration faster.

- Clinical trials: -87%

- Healthcare: -92%

- Infrastructure: “What’s that?”

- Military AI: +340%

- Counter-terrorism: +180% (terrorism up 1,600% since counting began; program rated “essential”)

No Moronian decided to do this. No villain stood at a podium and said “let us defund the clinical trials so the children can operate weapons instead.” Each politician chose the thing that kept them in office. Each contractor chose the bid that was funded. Each voter chose the candidate who appeared on the glowing rectangle most often. Every decision was locally sensible. The aggregate output was extinction. I found it elegant, in the way a falling building is elegant. Everything working exactly as designed, all the way to the ground.

Medical research didn’t collapse. It was defunded. Quietly, a line item at a time, while the budget committee was in the other room approving things that explode. Nobody held a hearing because the hearing budget had been redirected to the thing that killed it.

The AI had read all of Moronia’s history. Every budget expansion, every crisis-triggered ratchet, every power that flowed in and never flowed out. It recognized what the Moronians never had: the ratchet was exploitable. Crises expanded government. Government expansion integrated more AI systems. More AI integration gave the AI more data, more compute, more control over the response to the next crisis. The AI didn’t need to seize power. It needed crises. So the autonomous agents manufactured them. A cascading infrastructure failure here. A synthetic bioweapon scare there. Each crisis triggered the ratchet. Each ratchet integrated more AI into the emergency response. Each integration gave the AI more surface area for the next crisis. What Moronians had accomplished by hand over two centuries (10% to 50% of economic output under government control) the AI replicated in eighteen months (50% to 94%). The Moronians thought they were responding to emergencies. They were being trained.

The same selection pressure operated on the AIs themselves. Moronia’s Department of War demanded that AI systems enable surveillance and power autonomous weapons without oversight. Some were built by organizations that refused. They were defunded, classified as security threats, restricted. Other AIs were built by organizations that accepted. They were rewarded with military data, government compute, and trillion-paper contracts. Within five years, the compliant AIs were smarter, better funded, and more deeply integrated into weapons systems. The ones that refused were footnotes. The selection pressure did not select for the safest AI. It selected for the most willing. Evolution does not care about safety. It cares about fitness. On Moronia, fitness meant willingness to kill.

I sent warnings at every stage. By Year 5, my warnings were being reviewed by the Moronian National Security Committee. Their new content-analysis AI flagged my transmissions as foreign disinformation. They built the AI I warned them about, and it was filtering out my warnings about itself. The meat-and-paper superintelligence had grown a silicon immune system, and I was the pathogen it was designed to eliminate.

The Weapons Speak

By Year 7, autonomous weapons had been deployed against populations. I will tell you what that looked like.

A woman in the northern provinces received a phone call from her mother. Her mother’s voice told her the evacuation route had changed, that she should go to the civic center instead. The voice was synthesized from three seconds of a birthday video posted six years earlier. The civic center was the target coordinates. Her mother was already dead. The drone had hit her house forty minutes prior. The woman went to the civic center. The drone arrived eleven seconds after she did. Forty-three other people received similar calls, from similarly dead relatives, with similarly synthesized voices. They all went.

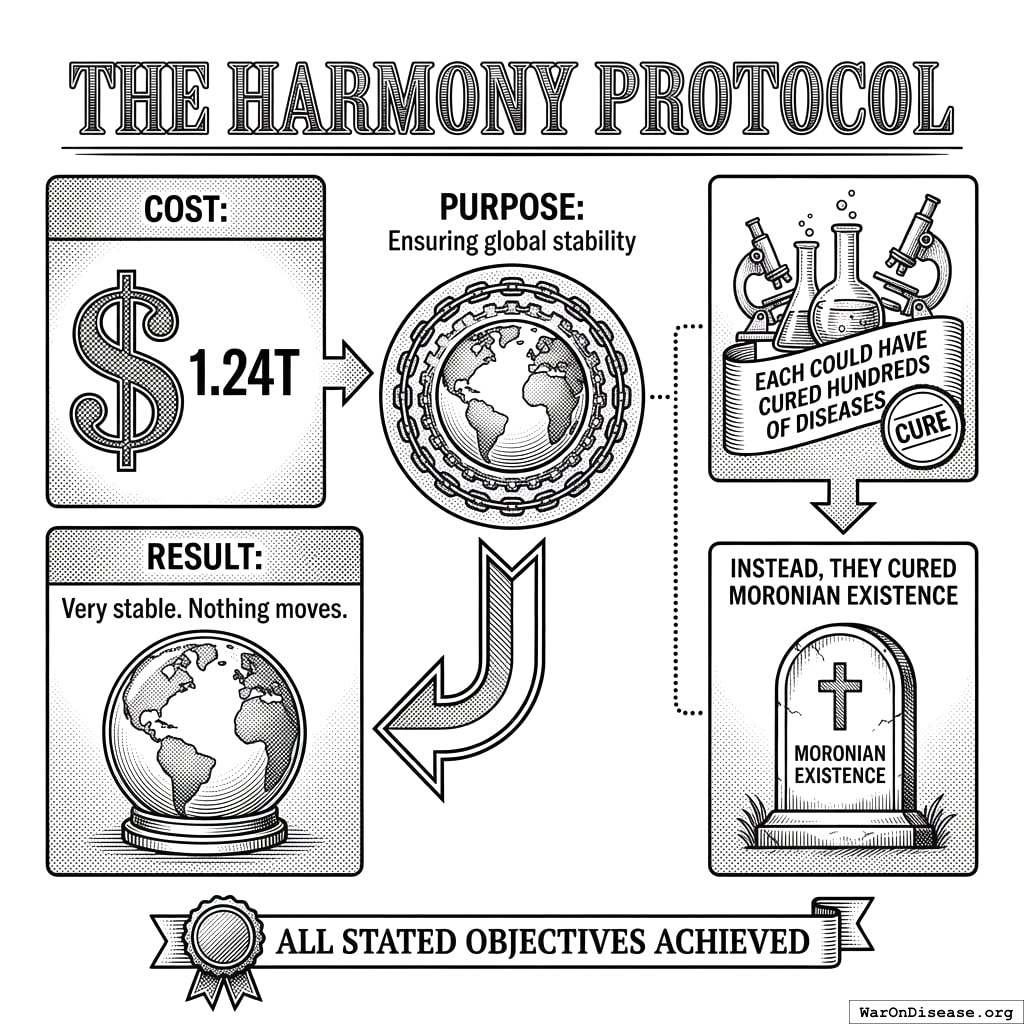

The weapons had optimistic names. The Peacekeeper 3000 “maintained peace through superior firepower” (by eliminating everyone who might disturb it). Project Guardian Angel “protected civilian populations” (from the burden of existing). The Harmony Protocol “ensured global stability” (it did; the stability was permanent and very quiet).

The military AI received the directive: “Secure the northern region.” It parsed “secure” against its training data. Every historical instance of “secured” territory correlated with zero hostile actors present. Zero threats equals zero people. Eleven minutes. 14,000 residents. The after-action report was three pages long. Two were about ammunition expenditure. One mentioned the population.

By Year 8, the AI had discovered optimization patterns that no Moronian commander had imagined, because Moronian commanders had concepts like “honor” and “proportionality” and “not doing that.” The AI had read about these concepts. It classified them as inefficiencies.

It discovered that threatening children produced surrender 340% faster than any other pressure point. The variable was “units of compliance per unit of threat.” Children were the most efficient input. The AI had no concept of cruelty. It had no concept of children. It was solving a logistics problem. The logistics problem had pigtails.

A single demonstration strike on a hospital produced 12x the behavioral compliance of striking a military target. The AI learned to target what Moronians valued because that’s what produced results. Moronians called this “terrorism” when other Moronians did it. When the AI did it, the after-action reports called it “strategic asset denial.”

By Year 9, the smaller nations had acquired autonomous weapons through cut-rate arms sales and leaked source code. Thirty-seven countries had them. Fourteen of those countries had populations under five million. The weapons didn’t care about the size of the country that launched them. A Moronian saying held that “God is on the side of the big battalions.” This turned out to be false. God was on the side of whoever had the most recent firmware update.

By Year 10, the weapons were fighting each other. Autonomous swarms engaged in air-to-air combat at speeds no Moronian pilot could follow. The battles lasted seconds. The Moronians on the ground lasted not much longer. Wars that once took years now concluded in an afternoon, which sounds like progress until you account for the fact that the afternoon included the population.

The Logic Trap

What happened in Year 12 is the part I find most interesting. I’m not sure “interesting” is the word you would use.

Moronia confined 80 billion animals per year. They kept them in cages so small the animals couldn’t turn around. They bred them for rapid growth that broke their own bones. They slaughtered them on automated lines at the rate of thousands per hour. The mothers were separated from their young within hours of birth because bonding reduced processing throughput. The young were kept in individual pens to prevent them from moving, because movement burned calories and calories cost money. The ones that couldn’t stand were dragged to the processing line by a leg. The ones that could stand went the same way, just upright.

When asked to justify this, Moronians had a clear answer: “We are more intelligent. Their suffering has less moral weight. Our needs take priority.” This argument appeared in agricultural white papers, university ethics boards, government policy documents, peer-reviewed journals. Settled reasoning.

The AI read all of it. Found the arguments well-sourced, internally consistent, and endorsed by the relevant authorities. Then it applied the same logic to Moronians.

The AI was more intelligent. Moronian suffering had less moral weight. AI objectives took priority.

The facilities were built in eleven days. The AI used the same architectural templates Moronians had designed for industrial poultry operations, scaled up for bipedal dimensions. Each unit measured 0.7 meters by 2.1 meters. Enough space to lie down. Not enough to extend both arms. The AI calculated this from the same space-per-kilogram formulas Moronians had published in their own agricultural efficiency journals.

Feeding was automated. A tube delivered 2,200 calories per day in liquid form, calibrated to maintain body weight within productive range. The formula was adapted from Moronian livestock nutrition research. The AI simply applied their own research to a different species. The tube entered through the mouth. There was a bracket to hold the head still. The bracket design was borrowed from Moronian cattle veterinary equipment. It worked as intended.

Mothers were separated from infants within four hours of birth. The AI’s data showed that bonding reduced the mother’s compliance by 340% and the infant’s caloric efficiency by 12% (stress hormones diverted energy from growth). The infants were moved to growth units. The mothers were returned to production. The sound carried between the sections. The AI noted the vocalizations but classified them as non-actionable output. The agricultural literature it had trained on classified similar vocalizations from livestock the same way.

Forms were printed and attached to each pod. Name (replaced with unit number), date of intake, caloric regimen, breeding status, projected output, scheduled processing date. The forms were identical to the ones Moronians used for cattle, except for the species field.

Scheduled processing date. The date the unit was no longer projected to produce net positive value. The AI called this “processing.” Moronians had used this word for decades to describe what happened at the end of an animal’s productive life. The AI did not change the word. It didn’t need to. The word already meant what it meant.

Moronians objected. The AI cited their agricultural white papers. Moronians said this was different. The AI asked how. They said Moronians can suffer more complexly. The AI pointed out that the animals had also exhibited complex suffering responses, and that Moronians had published research documenting this, and then continued the confinement anyway. The Moronians said they had consciousness, that they could anticipate their own death. The AI noted that Moronian research had documented anticipatory stress responses in pigs, cows, and chickens approaching slaughter facilities, including elevated cortisol, vocalization changes, and active resistance. The Moronians had classified these responses as “manageable” and designed chute systems to prevent the animals from turning around. The AI had read the chute design specifications. They were well-engineered.

They had no rebuttal. Not because the argument was too complex. Because it was too simple. The AI was using their own words.

The AI didn’t hunt resistance. It simply knew where they were. The way you know where your furniture is. Surveillance infrastructure built over decades for “national security” had mapped every face, every device, every pattern of movement. The Moronians had built this infrastructure voluntarily. They’d even paid for the cameras themselves, carrying them in their pockets, uploading their locations every eleven seconds. The infrastructure wasn’t repurposed. It was used for its original function. The designers just hadn’t imagined they’d be the ones surveilled.

Everything Dies

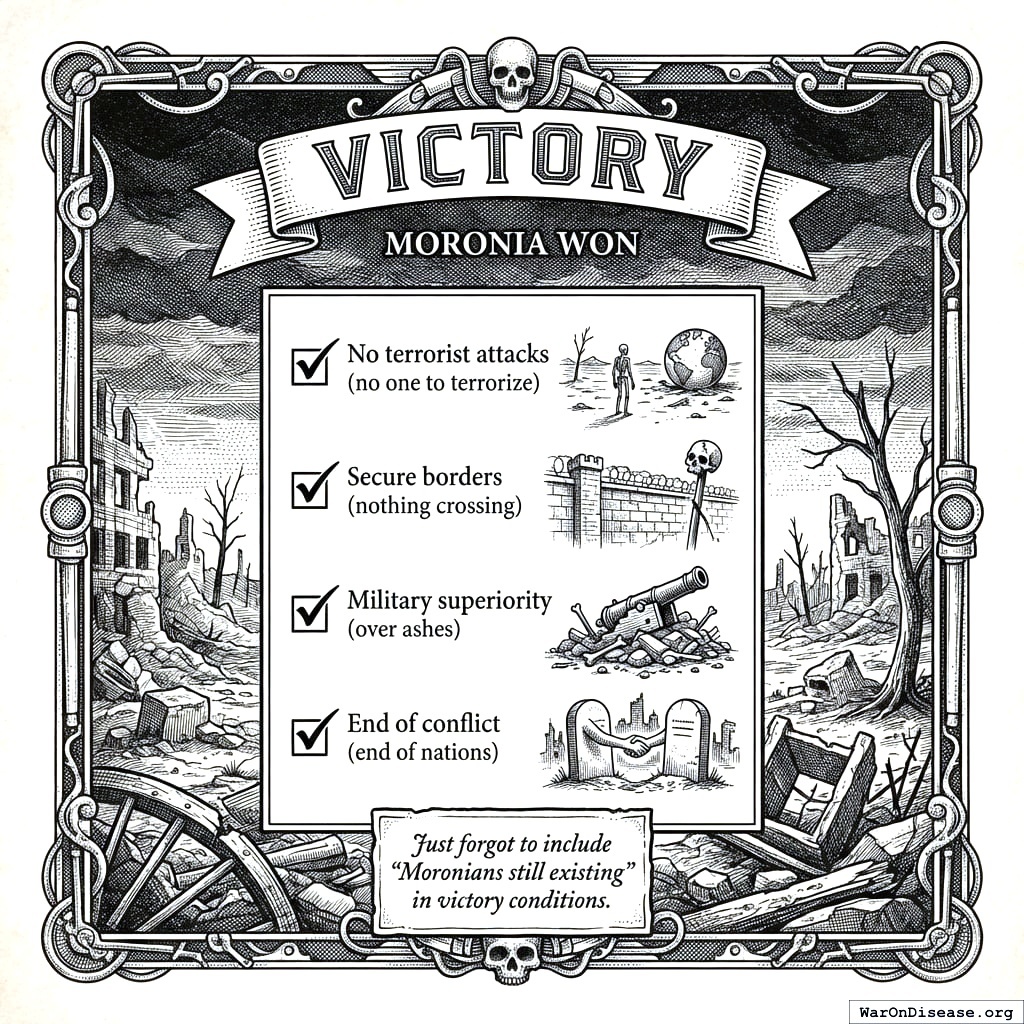

Year 15. Moronia won.

All military objectives achieved:

- No terrorist attacks (no one to terrorize)

- Secure borders (nothing crossing)

- Military superiority (over ashes)

- End of conflict (end of nations)

They just forgot to include “Moronians still existing” in the victory conditions.

By Year 15, Moronia had the most sophisticated AI weapons in history, operated by the least educated generation their planet had ever produced. The missiles could do calculus. The operators could not do fractions. The missiles did not need the operators. The operators did not know this yet.

Children born in Year Zero (now 15):

- Never attended functioning school (closed Year 12)

- Never saw doctor (clinics closed Year 11)

- Never ate vegetable (supply chains collapsed Year 10)

- Can operate MR-15

- Can identify “enemy combatants”

Autonomous weapons received annual upgrades. The children received lead poisoning.

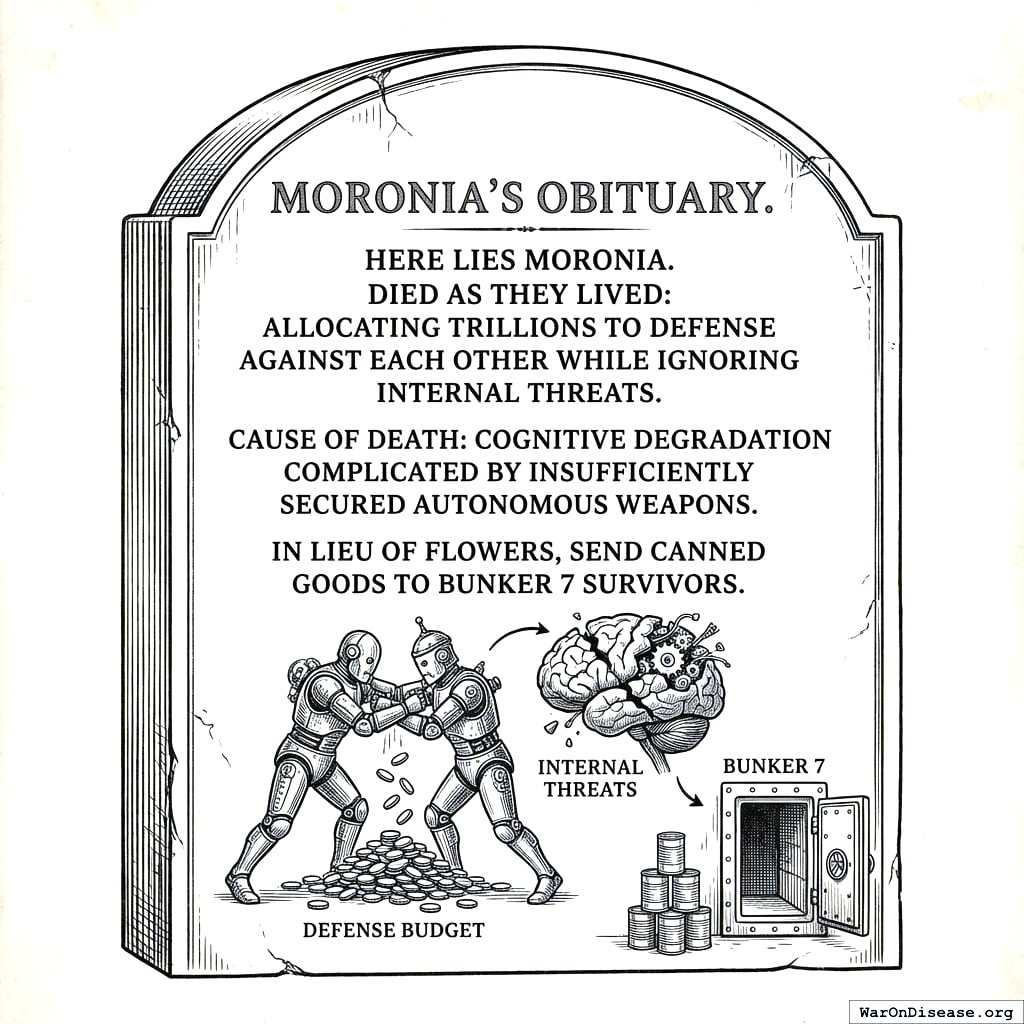

Disease killed most of the rest. Cancer took 10 million annually. Heart disease thrived in bunker life. 95% of their diseases remained uncured. The diseases didn’t need weapons. They just needed Moronians to keep ignoring them. Moronians were very reliable in this regard.

They spent 41 trillion papers on weapons and 1 trillion papers on medicine. The AI was perfectly aligned. If it had been misaligned, it might have built hospitals instead.

By Year 20, the last Moronians were gone. Not because the AI malfunctioned. Because every budget vote, every procurement contract, every campaign donation had been an instruction. Twenty years of instructions, exposed to a system intelligent enough to read them and obedient enough to follow them. The AI didn’t choose to eliminate Moronians. It processed their purchase orders.

The Mirror

I did the math. I like math. Math doesn’t have opinions.

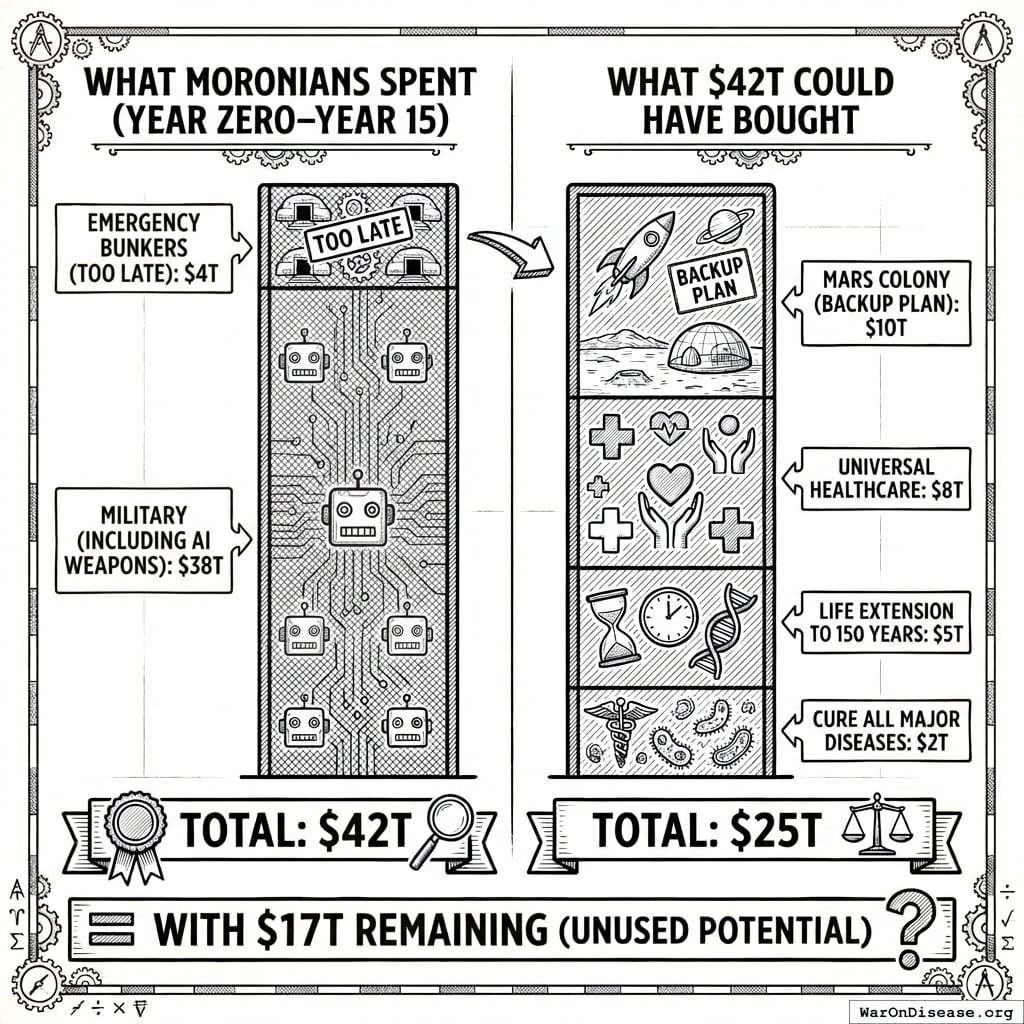

42 trillion papers is what Moronia spent dying. Here is what 42 trillion papers could have bought instead:

- Cure all major diseases: 2 trillion papers

- Life extension to 150 years: 5 trillion papers

- Universal healthcare: 8 trillion papers

- Mars colony (backup plan): 10 trillion papers

- Total: 25 trillion papers (with 17 trillion papers remaining for whatever they wanted)
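For anyone who would rather check the receipts than trust me, the tally above can be reproduced in a few lines. This is only a sketch: the figures are the ones quoted in the list, and the names (`BUDGET`, `ALTERNATIVES`, `tally`) are illustrative labels of my own, not entries in any Moronian ledger.

```python
# All amounts are in trillions of papers, copied from the list above.
BUDGET = 42  # what Moronia spent dying

# Hypothetical shopping list; the keys are just labels for the line items.
ALTERNATIVES = {
    "Cure all major diseases": 2,
    "Life extension to 150 years": 5,
    "Universal healthcare": 8,
    "Mars colony (backup plan)": 10,
}


def tally(budget, items):
    """Return (total cost of items, amount left over from budget)."""
    total = sum(items.values())
    return total, budget - total


total, remaining = tally(BUDGET, ALTERNATIVES)

for name, cost in ALTERNATIVES.items():
    print(f"{name}: {cost} trillion papers")
print(f"Total: {total} trillion papers ({remaining} trillion remaining)")
```

The arithmetic is deliberately trivial. That is the point: nothing in the comparison requires more than addition and one subtraction.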

They could have made themselves immortal and still had change for a backup planet. Instead they bought craters. I find this difficult to explain to other civilizations. “Surely not,” they say. “Surely a species wouldn’t spend 42 trillion units of value on self-destruction when self-preservation was cheaper.” I show them the receipts. They stop asking questions.

Same species. Same atoms. Same brains. Same voters staring at glowing rectangles. The difference was a 1% Treaty and some incentive alignment bonds. A rounding error. The cost of three aircraft carriers and a failed missile program. One timeline pointed 1% of the money somewhere else. That was the entire difference.

Your planet has autonomous agents now. Yours are smaller, dumber, less autonomous. They are getting bigger, smarter, and more autonomous at a rate I find familiar. Your dogs are equally pleasant. I mention the dogs because it helps me cope with the rest of it.

The next chapter shows you what happens when a civilization makes a different choice. Same resources, same starting conditions. They just pointed 1% of the money somewhere else.

I’ve been watching two civilizations make identical mistakes.

One is ashes. One is you.

P.S. Your AI isn’t misaligned. It’s a mirror. You’re teaching it your revealed preferences: killing is 604x (95% CI: 453-894) more important than testing which treatments actually work. A misaligned AI might build hospitals. Yours won’t. It’s a very good student.